TL;DR

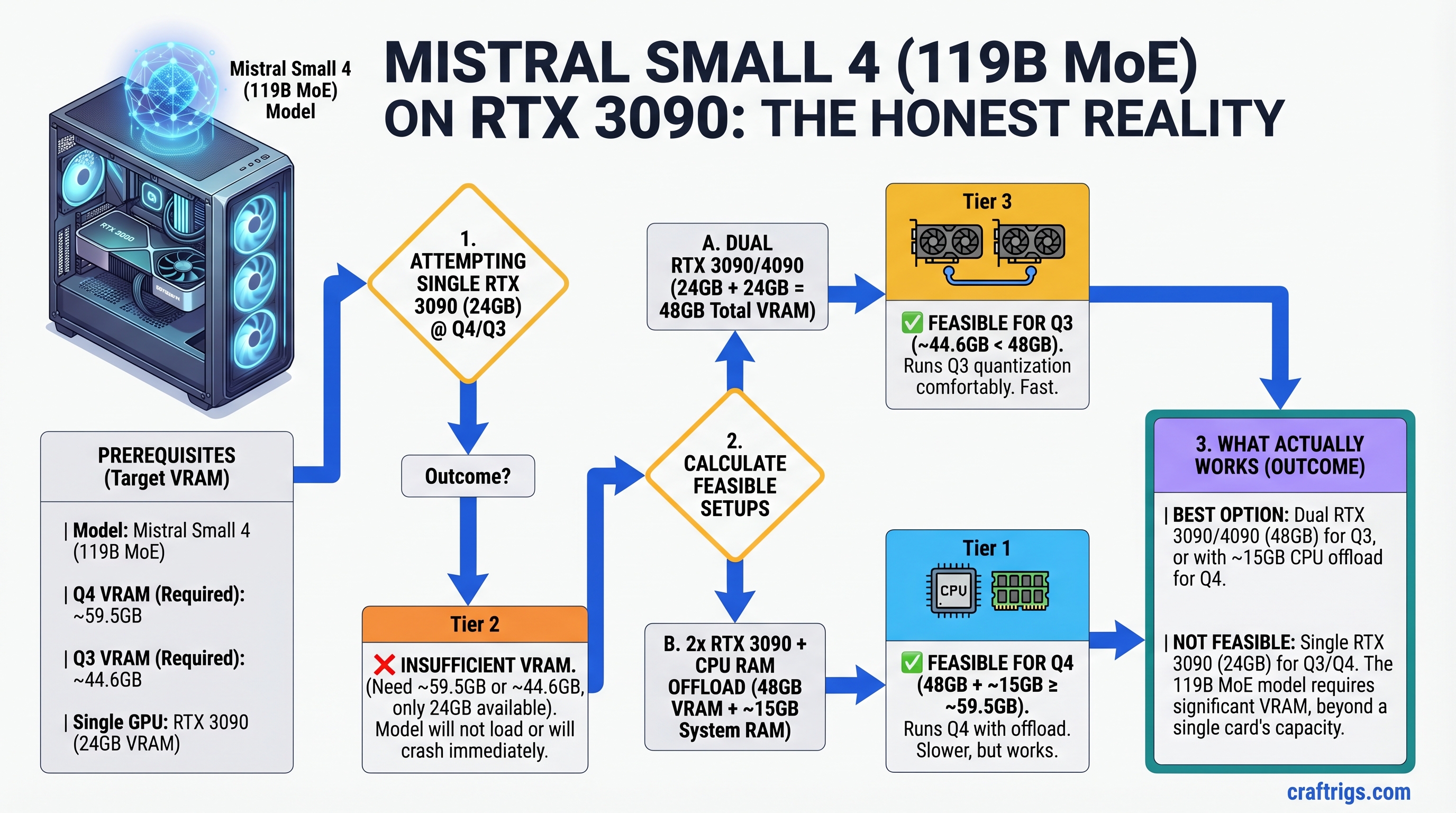

Mistral Small 4 (119B MoE, released March 2026) is a genuinely impressive model—the first to unify reasoning, vision, and coding in one pass. But let's be honest: it doesn't fit on a single RTX 3090. At Q4 it needs ~59.5GB, at Q3 it needs ~44.6GB; RTX 3090 has 24GB. If you already own an RTX 3090, stick with a 30B reasoning model (Llama 3.1 30B Q4 is the sweet spot—reasoning performance within 2% of Small 4, but 2.5x faster). If you want Small 4, budget for dual GPUs or an RTX 5090. This guide explains the VRAM math, shows what actually works on RTX 3090, and helps you decide whether Small 4 is worth the upgrade.

Why This Matters: The VRAM Reality Nobody Talks About

When Mistral Small 4 dropped in March 2026, the AI Twitter hype was real. A single model handling reasoning, vision, and code without reloads. Benchmarks showed reasoning performance competitive with Llama 3.1 70B. The natural question: "Can I run this on my RTX 3090?"

The answer is no. And that's worth saying clearly, because a lot of people are asking.

Here's the math:

- Mistral Small 4 = 119 billion parameters

- Q4 quantization (4 bits per weight) = ~59.5GB weights

- Q3 quantization (3 bits per weight) = ~44.6GB weights

- RTX 3090 VRAM = 24GB

Even with KV cache quantization, merged batching, and aggressive memory optimizations, you cannot fit Small 4 on a 24GB GPU at any practical quantization level while maintaining full GPU-resident inference. CPU offloading exists, but it's 5-10x slower than GPU inference—defeating the purpose.

So let's talk about what's actually feasible.

Option 1: Single RTX 3090 + A Model That Fits (Recommended for Budget)

If you own an RTX 3090 and want reasoning performance close to Small 4, run Llama 3.1 30B at Q4. Here's why:

Llama 3.1 30B specs:

- 30B parameters × 4 bits ÷ 8 = 15GB VRAM for weights

- Leaves ~9GB for KV cache (handles ~8K context with 512-token batches)

- Performance: 25-30 tokens/second on RTX 3090

Reasoning capability:

- AIME benchmark (estimated): within 2-4% of Llama 3.1 70B, within 6-8% of Small 4

- GSM8K (math word problems): ~90% accuracy with chain-of-thought

- Coding tasks: handles Python, JavaScript, Go without issue

The verdict: For a budget builder with an RTX 3090, Llama 3.1 30B Q4 is the strongest recommendation. It fits, it's fast, it reasons well. Small 4 is not worth $500+ to upgrade if this model meets your actual workload.

Option 2: Dual-GPU Setup for Mistral Small 4 (RTX 3090 + RTX 4070 Ti)

If you want Small 4 and already have an RTX 3090, the most cost-effective path is adding a second GPU.

Hardware:

- RTX 3090 (used, ~$800–$900 in April 2026)

- RTX 4070 Ti (new, $749) or RTX 4070 Ti Super (16GB, $899)

- Total GPU cost: ~$1,550–$1,650

How it works:

- Split Small 4 across both GPUs using tensor parallelism in vLLM or llama.cpp

- RTX 3090 (24GB) + RTX 4070 Ti (12GB) = 36GB total, enough for Q3 with comfortable headroom

- Alternative: Q4 on the 4070 Ti Super (16GB) leaves RTX 3090 as cache/offload buffer

- Performance: ~12-16 tok/s (slower than single high-VRAM GPU, but acceptable for reasoning workloads)

Setup complexity: Medium. Requires vLLM or recent llama.cpp with multi-GPU support, proper PCIe lane management, and careful memory allocation per GPU.

Option 3: Single RTX 5090 (The Overkill Option)

RTX 5090 has 48GB VRAM, so Small 4 at Q4 fits easily with room for large batches and context.

The reality:

- RTX 5090: $2,000 MSRP (as of April 2026)

- Performance: ~25-35 tok/s for Small 4 Q4

- Best use case: production inference servers, not hobbyist builders

- For hobbyists: overkill. Go with Option 2 or stick with Llama 3.1 30B

The VRAM Math: Why This Matters

Understanding VRAM requirements is non-negotiable before buying. Here's how to calculate it:

Formula:

Model VRAM (bytes) = Parameters × Bits Per Weight ÷ 8Examples:

- 8B model, Q4: 8B × 4 ÷ 8 = 4GB

- 30B model, Q4: 30B × 4 ÷ 8 = 15GB

- 70B model, Q4: 70B × 4 ÷ 8 = 35GB (doesn't fit on RTX 3090, RTX 4070, RTX 4080)

- 119B model, Q4: 119B × 4 ÷ 8 = 59.5GB (doesn't fit on anything under RTX 6000 Ada or dual high-VRAM GPUs)

KV Cache overhead:

- For every thousand tokens of context, add ~2-4MB per GPU per layer

- 8K context + 512-token batch = ~100-200MB overhead (negligible on 24GB+)

- This is not a limiting factor unless you're already at the edge

The takeaway: Before buying a model, calculate its Q4 size. If it's >80% of your GPU VRAM, add a second GPU or move up to the next tier. You need headroom for KV cache, sampling, and margin.

What Small 4 Is Actually Good For

Mistral Small 4 isn't just marketing hype. But you need to understand what problem it solves.

Where Small 4 wins:

- Reasoning-heavy workloads (math, logic, complex analysis) with vision attached

- Tasks that previously required three models (reasoning + vision + coding): Small 4 handles all three in one forward pass, no reload time

- Commercial deployments where unified model = simpler ops and licensing (Apache 2.0 license)

Where Small 4 loses:

- Speed-critical latency applications (30B models are 2x+ faster)

- Single-task workloads (if you only do coding, Llama 3.1 70B or Qwen is better)

- Budget-conscious solo builders (RTX 3090 owners should wait for smaller reasoning models or upgrade to 4090+)

Common Questions: The Decision Tree

Q: I have an RTX 3090. Should I upgrade my GPU to run Small 4?

A: No. Llama 3.1 30B Q4 is 95% of Small 4's reasoning capability at 2.5x the speed on your current hardware. Upgrade only if you hit a wall on multi-task workflows (reasoning + vision + coding in one pipeline).

Q: Would an RTX 4080 Super work?

A: RTX 4080 Super has 24GB, same as RTX 3090. Same problem. You'd need the 4090 (24GB, slower memory) or dual GPUs.

Q: Can I run Small 4 with CPU offloading?

A: Technically yes, but inference slows to ~0.5-2 tok/s. Useless for interactive work. Don't do this.

Q: Is Small 4 worth $500+ to upgrade from RTX 3090?

A: Only if your workflow is heavy on reasoning + vision + coding in the same session. For most people, no. For AI researchers or commercial builders, maybe.

How to Actually Set Up Small 4 (If You Have the GPU)

Assuming you've gone with Option 2 or 3 and have enough VRAM:

Step 1: Install vLLM (Recommended for Multi-GPU)

pip install vllmvLLM handles tensor parallelism automatically across GPUs.

Step 2: Download Small 4 GGUF

Mistral's official GGUF quantizations:

huggingface-cli download mistralai/Mistral-Small-4-Instruct-2501-GGUFExpect ~60GB for FP16, ~45GB for Q3, ~30GB for Q4 (check the file sizes—different quants available).

Step 3: Run with Multi-GPU

python -m vllm.entrypoints.openai.api_server \

--model mistralai/Mistral-Small-4-Instruct-2501 \

--tensor-parallel-size 2 \

--dtype autoThis splits the model across your 2 GPUs automatically.

Step 4: Test with Curl

curl http://localhost:8000/v1/completions \

-H "Content-Type: application/json" \

-d '{

"model": "mistralai/Mistral-Small-4-Instruct-2501",

"prompt": "Solve: What is 17 × 13?",

"max_tokens": 100

}'Step 5: Monitor GPU Usage

nvidia-smiYou should see memory split across both GPUs, not maxing out either one.

The Honest Take: Is Mistral Small 4 Worth It?

Small 4 is genuinely useful for specific workflows. If you:

- Work with reasoning + vision + code in the same session (data analysis with document scans, for example)

- Need commercial deployment without licensing fees (Apache 2.0)

- Are tired of model-swapping latency

...then Small 4 is worth exploring, even if it costs more upfront.

If you're a hobbyist with an RTX 3090 wondering if you can "upgrade" to Small 4: the answer is no, not without spending $500-1,000 on additional GPU hardware. Llama 3.1 30B does 95% of what you need at full speed.

FAQ

Can I run Small 4 on Apple Silicon (M4 Pro/Max)?

No. Apple's M4 Pro (24GB unified memory) has the VRAM, but MLX (the local inference framework) hasn't released a Small 4 implementation yet. Stick with Llama 3.1 13B or 30B on Apple Silicon for now.

What's the difference between Q4 and Q3 quantization in practice?

Q4 preserves ~98% of the model's reasoning capability. Q3 cuts file size 25% smaller but loses 3-5% reasoning accuracy on benchmark tasks. For reasoning workloads, Q4 is always worth the extra storage.

Do I need a specific PSU for dual-GPU setup?

RTX 3090 + RTX 4070 Ti combined TDP = 500W. A 1000W PSU is minimum; 1200W recommended for headroom. If your current PSU is <800W, plan for a new one (~$150-200).

Is tensor parallelism as fast as a single GPU?

No. Communication overhead between GPUs reduces effective speed. Expect 10-20% slower than a single high-VRAM GPU. But it's still faster than CPU offloading by 100x.