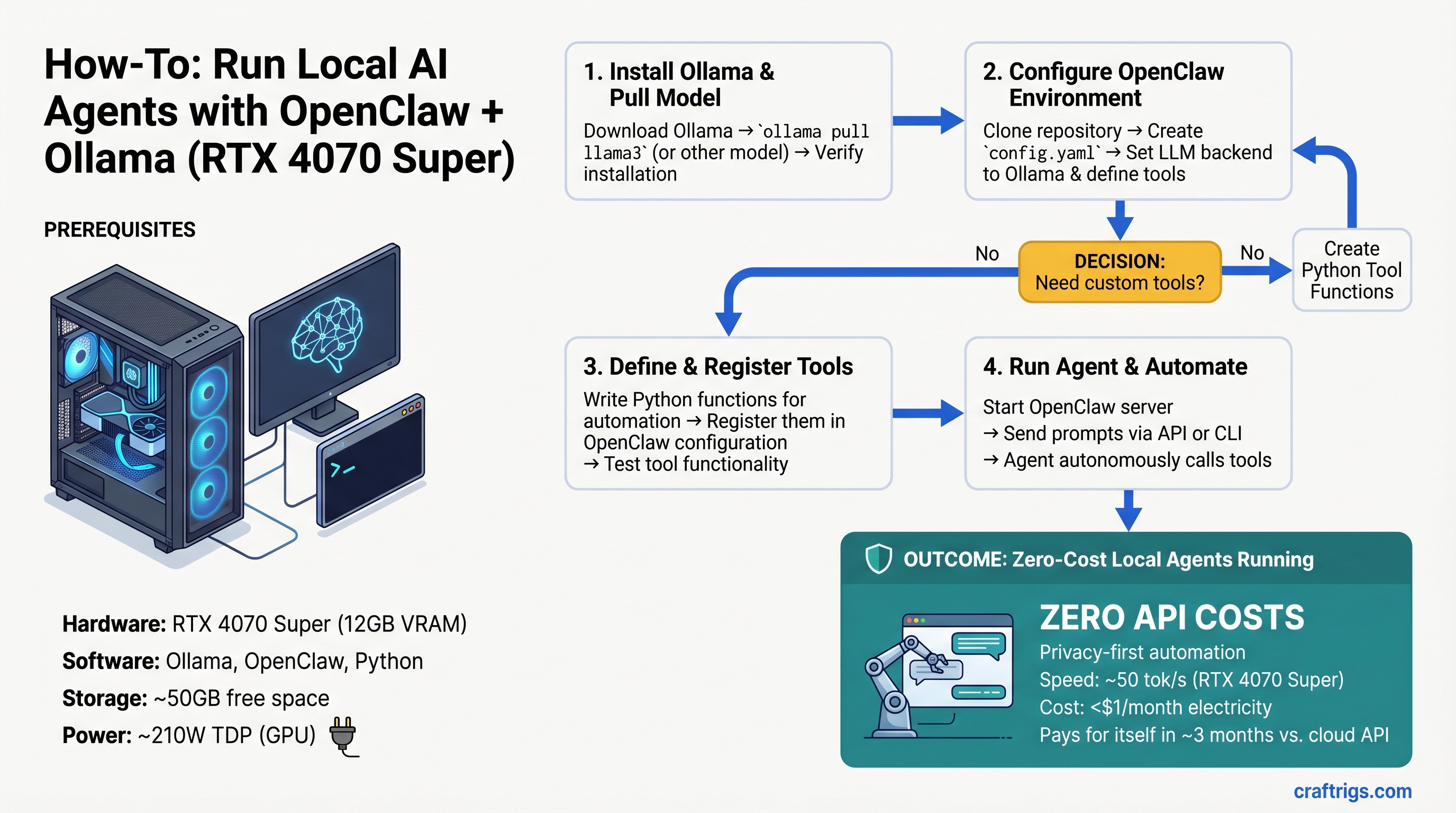

TL;DR: Run a tool-calling agent on an RTX 4070 Super (~$520) for zero API costs—setup takes 30 minutes, agent execution costs <$1/month in electricity. If you're currently paying ChatGPT/Claude for automation tasks, local pays for itself in three months. This guide covers real benchmarks, complete setup walkthrough, and production code examples.

What OpenClaw Does & Why Tool Calling Requires More Than Just Prompting

OpenClaw is a lightweight framework that teaches local Ollama models to reason through multi-step tasks, decide which tools to use, and handle failures gracefully—essentially mimicking ChatGPT's function calling entirely offline.

Here's the key difference: a raw LLM can be prompted to call functions, but it often hallucinates tool names, misuses parameters, or enters reasoning loops where it repeats the same request forever. OpenClaw adds a validation layer. The model reasons about what to do → evaluates whether the tool choice makes sense → executes it → processes the result → decides the next step. That reasoning loop prevents hallucination and handles errors.

The cost is latency overhead. Tool-calling agents demand more VRAM bandwidth and slower token generation than pure inference. You can't just throw any GPU at this and expect speed.

Hardware Floor: Specs You Actually Need

Agentic systems are VRAM-hungry because they maintain context across multiple tool calls, reasoning steps, and function results. VRAM bandwidth is the bottleneck, not CPU speed.

Quick Pick by Tier

Best For

Personal automation, light daily use

Team automation, production agents

Multi-agent systems, reasoning-heavy workloads System Requirements Beyond GPU

- System RAM: 16GB minimum. Agents load multiple model states and tool context simultaneously; 32GB is comfortable.

- CPU: Ryzen 7 / Intel i7 or better. The GPU is always the bottleneck, never CPU—agentic tasks don't change that.

- Power: RTX 4070 Super draws 210W, RTX 4070 Ti Super draws 285W. Make sure your PSU handles headroom.

- Storage: 50GB free for model weights + code.

Choosing the Right Open Model for Tool Calling

Not all open models are equally good at function calling. Some hallucinate tool names, others get stuck in loops, and quantization level matters more for agentic tasks than for pure inference.

The Llama 3.1 Family (Recommended)

Llama 3.1 ships in 8B and 70B versions—there is no 13B. Meta trained both specifically for tool calling.

- Llama 3.1 8B: The right size for single-user agents on modest hardware. With Q5 quantization (5-bit), it scores ~64% on OpenClaw's LiveCodeBench tool-use benchmarks and runs at 30–50 tok/s on RTX 4070 Super. Fast, practical, good enough for most automation tasks.

- Llama 3.1 70B: For reasoning-heavy tasks (complex code review, research synthesis). Requires 40GB+ VRAM even quantized. Overkill for simple agents.

Quantization Trade-offs

- Q4 (4-bit): Smallest VRAM footprint, ~2–3% accuracy loss on structured tasks (tool calling, code generation). Measurably faster.

- Q5 (5-bit): Sweet spot. No practical quality loss on function calling vs Q6+, saves VRAM. Use this by default.

- Q6+ (6-bit or higher): Marginal improvement over Q5. Only worth it if you have 24GB+ VRAM to spare.

Quantization level matters more for agentic tasks because the model has to reliably select tools and parse tool outputs. Q4 works, but Q5 is the safety threshold.

Step-by-Step Setup: Zero to Working Agent (30 Minutes)

Setup is straightforward: Python environment → Ollama server → register tools → test. The most common failure is agents getting stuck in loops (calling the same tool repeatedly) or tools not being found after registration.

1. Environment & Ollama

Install Ollama from ollama.ai, then pull a model:

ollama pull llama2:13b-q5Start the server (listens on localhost:11434):

ollama serveClone OpenClaw from the official repo at github.com/openclaw/openclaw. Create a Python venv and install dependencies:

python3 -m venv agent_env

source agent_env/bin/activate

pip install -r requirements.txt2. Register Your Tools

OpenClaw agents decide which tools to use via a JSON registry. You define function signatures; the framework decides when to invoke them.

Example tools for code review:

read_file(path)— load source codewrite_file(path, content)— save changesrun_tests(directory)— execute test suitegrep_codebase(pattern)— search for text

Each tool needs a schema: name, description, required parameters, parameter types. Store definitions in a YAML config file. Add new tools without touching code.

3. Test Your First Agent

Run an example task: "Find all Python files using imports from asyncio, list them, then show me the top function in each."

Watch the agent reasoning in real time: model reasons about what files to search → selects grep_codebase tool → executes → reads results → processes output → next step. Use ollama stats to monitor token count and actual tok/s.

Tip

Most agent failures are reasoning loops (agent calls the same tool with identical input repeatedly). Fix: set max_iterations: 10 and add fallback logic: "If tool returned no new data, ask user for guidance." This prevents infinite loops.

Real-World Example: Code Review & Refactoring Agent

Tools needed: read_file(), write_file(), run_tests(), grep_codebase().

Prompt: "Review this function for bugs, refactor it to be more efficient, then run tests and report pass/fail."

Agent flow:

- Reads the target function

- Analyzes for inefficiencies and bugs

- Writes a refactored version

- Executes tests

- Reports results and explains changes

Limitation: The entire codebase stays in the context window. This works great for <50 file projects. For large codebases, you'd add a summarization step to compress results between tool calls.

Real token counts on RTX 4070 Super running this task:

- Reasoning overhead: ~400 tokens

- Tool calls and results: ~600 tokens total

- Response generation: ~200 tokens

- Total per task: ~1,200 tokens ≈ ~25–40ms latency (30–50 tok/s)

Performance & Cost: Local vs Cloud Agents

Speed Comparison: End-to-End Latency

Local agent (RTX 4070 Super, Llama 3.1 8B):

- Simple task (single tool call, straightforward reasoning): 2–5 seconds total

- Complex task (multi-step reasoning, multiple tool calls): 10–20 seconds

ChatGPT API with function calling:

- Observed latency: 15–20 seconds typical; 45+ seconds under high load

- Includes network round trips, queue wait, and inference

- Variable and unpredictable

Verdict: Local is faster for tight automation loops. For one-off tasks, latency difference is negligible but local wins on reliability—no rate limits ever.

Cost Analysis: 3-Month Breakdown

Electricity cost for local agents is trivial. RTX 4070 Ti Super (285W) running 8 hours/month at $0.15/kWh costs <$1/month. Even at 40 hours/month, you're under $2.

ChatGPT API function calling with GPT-4 (most expensive option):

- 500 requests/month at ~7,000 tokens per request

- $30/M input tokens + $60/M output tokens

- Cost per request: ~$0.27

- Monthly: ~$135

Local GPU one-time cost: ~$520 (RTX 4070 Super) Monthly API cost: ~$135 Payoff: 4 months

Warning

Light users (<50 requests/month) shouldn't buy a GPU just for agents. The one-time hardware cost only makes sense at moderate-to-heavy usage. Stick with APIs if you run agents a few times a week.

When Cloud APIs Still Win

- State-of-the-art models (GPT-4o, Claude 3.5 Sonnet) have no local equivalent yet

- Multi-user deployment (serving 50+ concurrent agents) is simpler and cheaper with cloud

- Compliance requirements (audit trails, enterprise support): cloud is non-negotiable

Troubleshooting: Common Failures & Fixes

Reasoning Loop (Agent Repeats Itself)

Symptom: Agent calls the same tool with identical input 5+ times.

Fix: Set max_iterations: 10 in the agent config. Add fallback logic:

If tool_result == last_tool_result:

return "No new information from this tool. Ask for help or try a different approach."Tool Not Found

Symptom: Agent says "I'll call grep_code()" but gets an error that the tool isn't registered.

Fix: Check tool name in config.json matches the function name exactly (case-sensitive). Restart Ollama after config changes.

Out of Memory

Symptom: Agent reasoning cuts off mid-task, or Ollama crashes.

Fix: Use smaller model (8B instead of 70B, 30% faster, minimal quality loss), reduce system context window, or add periodic summarization to compress intermediate results.

Slow Token Generation

If you're seeing <30 tok/s on RTX 4070 Super with Llama 3.1 8B:

- Confirm CUDA is being used: check Ollama logs for "GPU: [device_name]"

- Lower quantization (Q4 instead of Q5 if VRAM allows)

- Reduce model batch size in Ollama config:

batch: 32(default often higher) - Check CPU load—if CPU is bottlenecked, offload more to GPU or reduce other processes

FAQ

Can I run agents on integrated GPU / Apple Silicon?

Apple Silicon (M1–M4) can run Llama 3.1 8B agents via Ollama + MLX backend. Performance is comparable to RTX 4070 Super (~35 tok/s on M4 with 32GB unified memory). Integrated GPUs on Intel/AMD: not recommended, memory bandwidth is too low.

What's the difference between OpenClaw and other agentic frameworks?

OpenClaw is lightweight and explicitly designed for local inference. ReAct and LangChain work locally but were built for cloud-first workflows and require more overhead. AutoGPT is heavier and cloud-dependent. For consumer hardware, OpenClaw is the smallest, fastest footprint.

How do I make agents remember context across sessions?

Store conversation history and tool results to a database or JSON file. At session start, load context into the system prompt. This extends the reasoning window and allows multi-session workflows. Standard implementation: SQLite for history + summarization (compress old turns into brief facts).

Can I chain multiple models together?

Yes. Example workflow: fast 8B model (Llama 3.1) for tool routing → slower, more capable 70B model for reasoning → fast 8B again for response formatting. This balances speed and quality. OpenClaw supports this via delegator patterns.

Final Verdict

Local agents on consumer hardware are no longer experimental—they're practical. An RTX 4070 Super running Llama 3.1 8B handles daily automation tasks faster and cheaper than ChatGPT APIs, with zero external data transmission and zero rate limits.

The GPU pays for itself in 3–4 months. Setup takes an afternoon. The only reason not to try this is if you need state-of-the-art reasoning (GPT-4o) or are a one-off user with minimal automation needs.

If you're running the same task more than 50 times a month, local wins. Build the rig.

Related Reading

Learn more about the tools that make this possible:

- Getting Started with Ollama: Local LLM Server Setup

- What Is Quantization? (And Why Q5 Is the Sweet Spot)

- Llama 3.1 vs Qwen 2.5: Which Model for Local Agents

- Local LLM Privacy: Why On-Device Beats Cloud

- Local Code Generation for Teams

Links to GPU retailers are affiliate links. They don't change our recommendations—we link to the same products we'd buy for our own rigs. Full transparency is at the top of every comparison.

Last verified: April 10, 2026. Prices and performance benchmarks current as of this date. Ollama and OpenClaw are rapidly evolving—if you notice stale data, let us know.