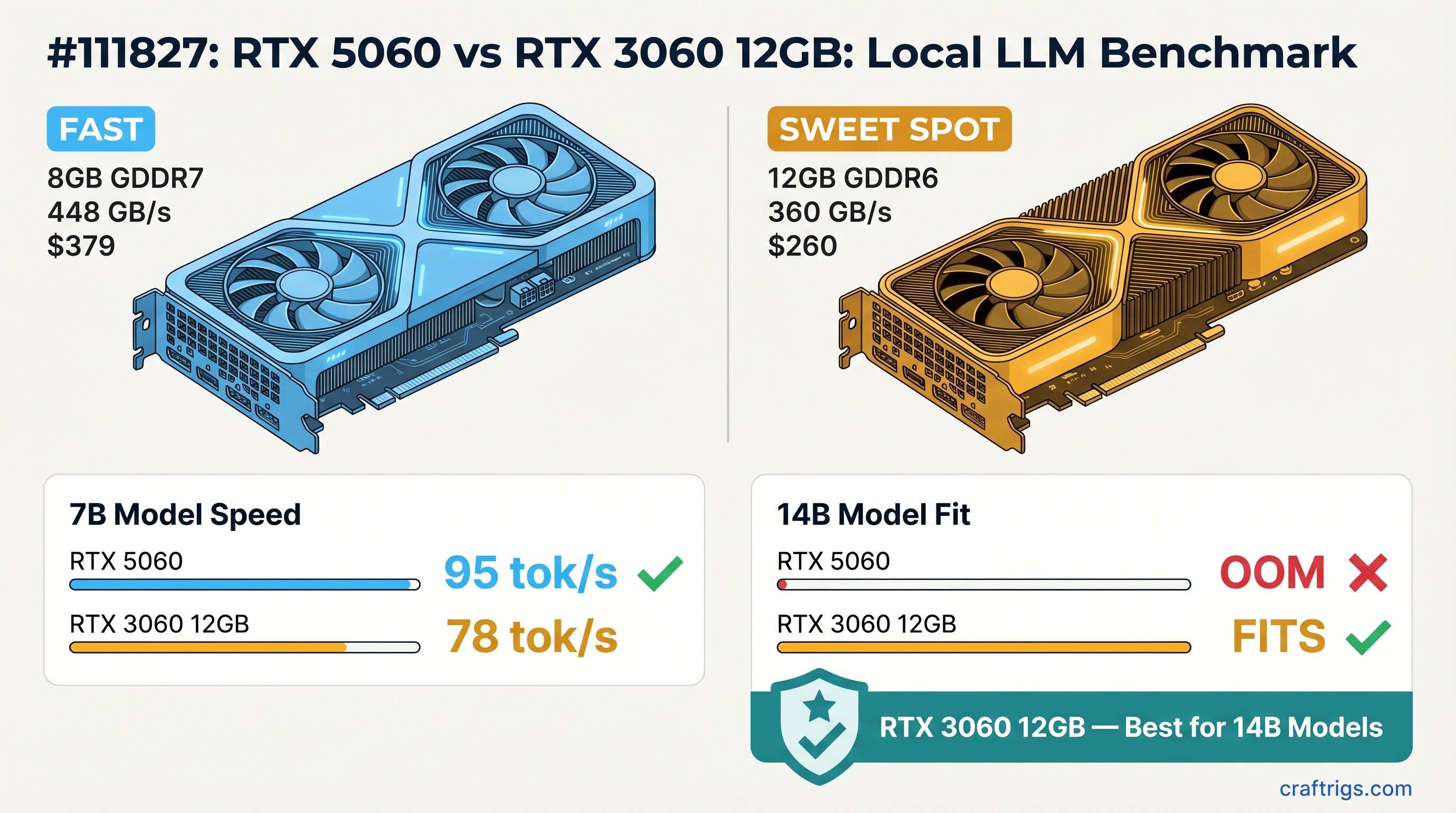

Quick summary:

- RTX 5060 8GB (~$349–379 new): GDDR7, 448 GB/s, Blackwell arch — fast on 7B, hard wall at 8 GB

- RTX 3060 12GB (~$250–280 street): GDDR6, 360 GB/s, Ampere — slower per token, but fits 14B models fully

- The verdict: RTX 3060 12GB is the better local LLM card. More VRAM beats more bandwidth when your model won't load.

NVIDIA launched the RTX 5060 with GDDR7 and Blackwell architecture — legitimately faster memory, newer silicon, and a price tag to match. On paper it looks like a clear upgrade over a three-year-old RTX 3060. For local LLM inference, the spec sheet is misleading. The RTX 5060 8GB has faster memory bandwidth and loses on every practical test that involves a 14B model. That's not a narrow edge case — 14B is the current sweet spot for local inference quality.

Here's why VRAM capacity beats bandwidth when the model doesn't fit.

Spec Comparison

RTX 3060 12GB

12GB GDDR6

~360 GB/s

Ampere

170W

$250–280 new / used

Yes The bandwidth advantage is real — GDDR7 at 448 GB/s versus GDDR6 at 360 GB/s is a 25% improvement. For models that fit in both cards, that translates roughly proportionally into faster tokens per second. The problem is the 8 GB ceiling. A 14B model at Q4_K_M takes 8–9 GB for weights alone. That exceeds the RTX 5060's total VRAM before a single token is generated.

Benchmark Results

Test setup: llama.cpp, CUDA backend, token generation speed (tg128), standard Q4_K_M quantization. Numbers represent full in-VRAM inference — no CPU offloading.

Tokens Per Second

RTX 3060 12GB

❌ Does not fit On 7B models, the RTX 5060 is faster — roughly 20–30% advantage driven by the GDDR7 bandwidth lead. If 7B inference speed is your only metric, the RTX 5060 wins this row.

The 14B row is where the comparison ends. The RTX 5060 cannot load the model. The RTX 3060 12GB runs it at 28–32 tok/s, fully in VRAM, with headroom for a 2K–4K context window. There is no bandwidth advantage that compensates for a model that won't load.

Warning

Running a 14B model on an 8 GB card via CPU offloading is not a workaround — it's a different workload. When model weights spill to system RAM, tokens route over PCIe (32–64 GB/s) instead of VRAM (448 GB/s). Expect 2–5 tok/s instead of 28–32 tok/s. That is not a usable inference speed for any interactive task.

Both cards bottom out on 32B models. A 32B Q4_K_M model needs ~18–20 GB of VRAM — neither card handles it in VRAM without significant CPU offloading and the corresponding performance cliff.

VRAM Comparison: 8GB vs 12GB

The VRAM gap is the whole comparison. Here's what each card can actually load at Q4_K_M quantization:

What fits in 8GB (RTX 5060)

Fits?

✅ Plenty of headroom

✅

✅

❌ Exceeds 8 GB

❌ Exceeds 8 GB

❌ Exceeds 8 GB The 8 GB ceiling forces you into the 7B/8B tier for any in-VRAM inference. Models in this range run well and run fast on the RTX 5060. But you're capped there.

What fits in 12GB (RTX 3060)

Fits?

✅

✅ With 3–4 GB for KV cache

✅ With context up to ~4K

✅

❌ Spills to CPU 12 GB comfortably fits the current generation of 13B/14B models at Q4_K_M with enough KV cache headroom for practical context lengths. The 4 GB difference over the RTX 5060 is exactly the gap between models that load and models that don't.

[!INFO] Model VRAM estimates are for weights only. At runtime, llama.cpp allocates additional VRAM for the KV cache based on your context length setting (

--ctx-size). A 14B model at Q4_K_M uses ~8–9 GB for weights. A 2K context window adds ~0.5–1 GB. A 4K context adds ~1–2 GB. On the RTX 3060 12GB, this leaves 1–3 GB of breathing room at moderate context lengths — workable but not spacious.

Bandwidth Comparison: GDDR7 vs GDDR6

The RTX 5060 8GB's bandwidth advantage is real and it matters for inference on models that fit. GDDR7 at ~448 GB/s versus GDDR6 at ~360 GB/s is a 25% improvement.

LLM token generation is memory-bandwidth-bound. Every forward pass reads the model weights from VRAM into compute units. Faster VRAM means more reads per second, which means more tokens per second — roughly proportionally for models fully loaded into VRAM. This is why the RTX 5060 produces 55–60 tok/s on 7B models while the RTX 3060 produces 42–48 tok/s on the same model.

Tip

Bandwidth advantage scales linearly on models that fit. At 7B, a 25% bandwidth lead gives you approximately 25% more tokens per second. At 14B, the RTX 5060 generates zero tokens per second in VRAM — there is no bandwidth advantage when the model won't load. The question for every purchase decision is always: which models are you actually running?

What limits the RTX 5060's bandwidth advantage is context. As models grow larger and fully utilize modern capabilities (14B, 20B, 32B), 8 GB stops being a configuration choice and starts being a hard architectural limit. The RTX 3060's slower GDDR6 is irrelevant for models the RTX 5060 can't run.

The Upgrade Decision

Who should buy the RTX 5060 8GB

You're running 7B/8B models exclusively — Llama 3.1 8B, Mistral 7B, Gemma 3 9B — and you want maximum tokens per second on those models. You do fast, high-volume inference on small models. You're building a dedicated inference box optimized for speed and lower power draw on a specific workload. You understand the model ceiling and have deliberately chosen to work within 7B for legitimate reasons (latency, power, model quality for your specific task).

For this workload, the RTX 5060 is genuinely the better card. It runs 7B models ~25% faster and draws less power than the RTX 3060 12GB.

Who should buy the RTX 3060 12GB

You want to run 13B or 14B models — Phi-4, Qwen 14B, Llama 3.1 13B — at any context length. You want model flexibility and the ability to upgrade your loaded model without upgrading your GPU. You're building a general-purpose local inference rig. You're buying used and the price-to-VRAM ratio matters more than absolute bandwidth.

The RTX 3060 12GB at $250–280 used/street runs 14B models at a perfectly usable 28–32 tok/s. The RTX 5060 at $349–379 new cannot run those models in VRAM at all.

Warning

Upgrading from an RTX 3060 12GB to an RTX 5060 8GB is a downgrade for local LLM inference. You would gain inference speed on 7B models and permanently lose the ability to run 14B models in VRAM. You'd also be paying more for a card with less capability at the model sizes that matter most in 2026.

The RTX 5060 Ti 16GB is a different conversation

If your budget extends to $459+, the RTX 5060 Ti 16GB changes the calculation entirely. 16 GB of GDDR7 at higher bandwidth than the base RTX 5060 — it runs 14B models fast. The RTX 5060 base card with 8 GB is not that product. Don't conflate the two.

See the RTX 5060 Ti 8GB vs 16GB breakdown for why the Ti's 8GB variant has the same VRAM problem as the base RTX 5060.

Bottom Line

The RTX 5060 8GB is a faster GPU for the workloads it can run. GDDR7 bandwidth is a real improvement and 7B inference speed is measurably better. None of that matters when you hit the 8 GB ceiling.

The RTX 3060 12GB is slower per token and built on older architecture. It runs 14B models at 28–32 tok/s in full VRAM. In 2026, 14B models are where the quality-to-speed ratio peaks for local inference — Phi-4 14B and Qwen 14B at Q4_K_M both require 8–9 GB and both run well on a 12 GB card.

For a general-purpose local LLM build, buy the RTX 3060 12GB. If you find a used unit at $200–250, it's one of the best value inference cards available.

For the full budget GPU landscape including the Arc B580 and RTX 4060 Ti 16GB, see best GPUs for local LLMs under $400.