Quick Summary

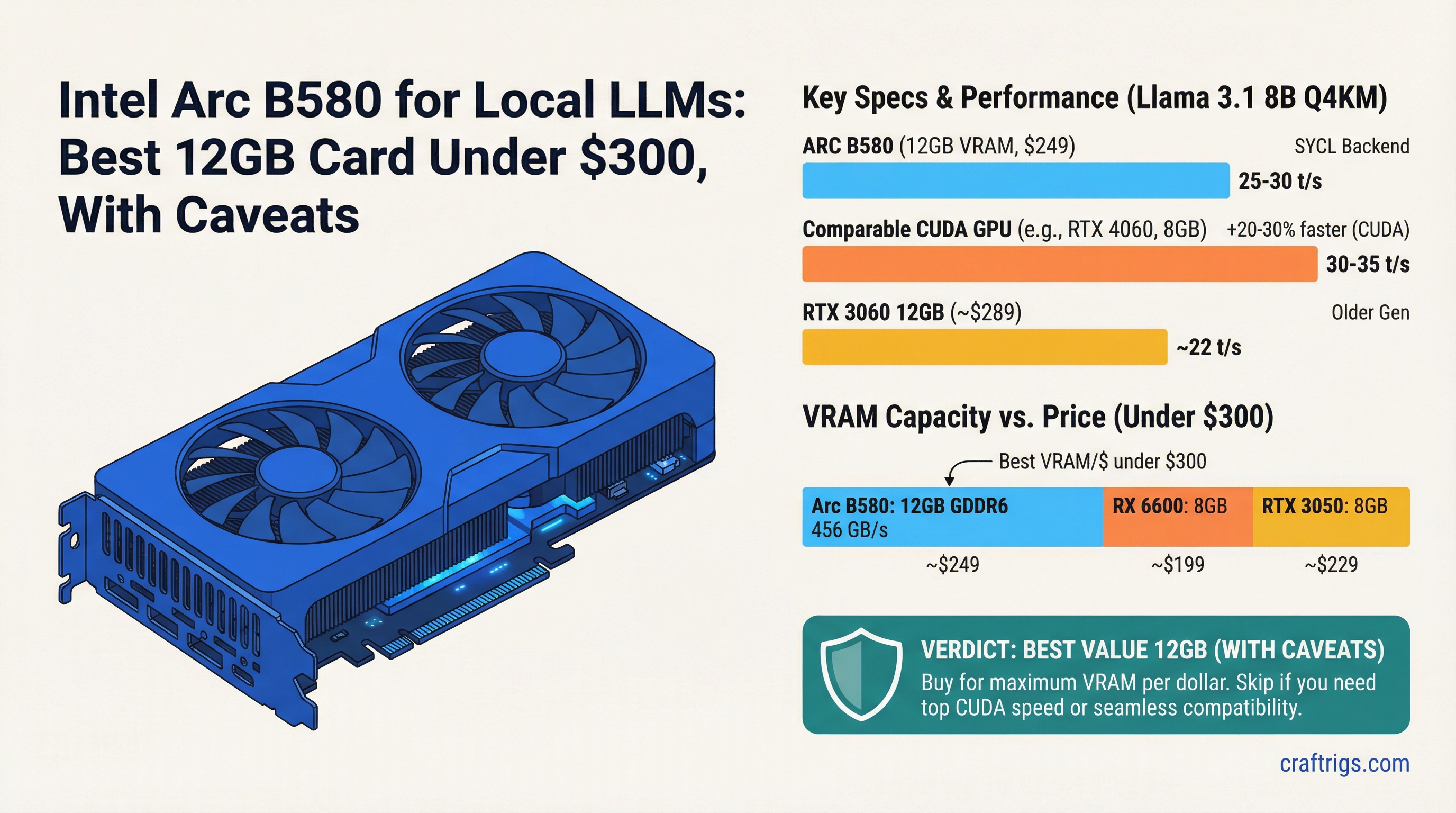

- Price: $249 MSRP, often available at $249-269 retail

- Key spec: 12GB GDDR6 at 456 GB/s bandwidth — best VRAM per dollar under $300

- Real benchmark: Llama 3.1 8B Q4_K_M at ~25-30 t/s (SYCL backend) — 20-30% slower than CUDA on comparable hardware

The Arc B580 occupies a specific, useful niche: it's the only 12GB GPU under $300. That fact drives most of the interest in it for local LLM use, and it's a legitimate reason to consider the card. But "best VRAM per dollar" and "best card under $300" aren't the same thing, and the B580's SYCL software stack introduces real overhead that affects day-to-day inference speed.

Here's what the card actually does, and who should buy it.

The Case For the B580: VRAM Per Dollar

At $249, the closest NVIDIA alternatives are:

- RTX 4060 8GB (~$299 new): faster, but 8GB limits model size significantly

- RTX 3060 12GB (~$150-180 used): 12GB CUDA, but used-market-only and older architecture

For a new-purchase budget card with 12GB VRAM, the B580 has no direct competitor at its price point. This matters because 12GB VRAM is the threshold for running 13B models fully in VRAM. At 8GB, you're limited to 7B-8B models at Q4_K_M, or you start CPU offloading — which tanks inference speed.

The bandwidth spec looks strong on paper: 456 GB/s GDDR6. That's faster than the RTX 4060 Ti 16GB's 288 GB/s. The problem is software overhead.

The SYCL Backend: The Real Performance Story

llama.cpp supports Intel GPUs via the SYCL (pronounced "sickle") compute backend. It works — correctly and reliably in 2026. But SYCL carries per-kernel overhead that CUDA doesn't have at the same degree. The result: the B580's raw bandwidth advantage over mid-range NVIDIA cards doesn't translate to faster inference. Instead, it's slower.

Real benchmarks on the B580 12GB:

- Llama 3.1 8B Q4_K_M: ~25-30 tokens/second

- Llama 3.1 13B Q4_K_M: ~18-22 tokens/second

Compare to RTX 4060 8GB (~35 t/s on 8B) or RTX 4060 Ti 16GB (~40 t/s on 8B). The B580 runs inference at roughly 70-75% of the speed of NVIDIA cards at similar price points.

That 25-30% slowdown is measurable in practice. At 25 t/s on 8B, the B580 is still fast enough for interactive use — you're not watching a cursor blink. But you feel the difference against a CUDA card at 35-40 t/s during extended sessions.

Intel's IPEX (Intel Extension for PyTorch) framework provides better tooling for some use cases than SYCL alone, but llama.cpp's SYCL backend is the primary inference path for most users.

Driver Stability in 2026

The Arc B580 launched in late 2024 and early driver revisions had stability issues under sustained compute workloads — lockups, compute API crashes, inconsistent behavior across runs. This was a real problem that drove many early users away.

Through 2025, Intel pushed consistent driver updates targeting compute stability. By early 2026, the situation has improved meaningfully:

- Sustained llama.cpp inference sessions complete reliably on the current driver stack

- Ollama's Intel backend support has stabilized

- Fewer model compatibility issues than in the first six months post-launch

Driver stability is no longer a reason to avoid the B580 for basic inference workloads. It's worth noting that "improved" isn't "equivalent to NVIDIA" — NVIDIA's driver stack has a decade of compute workload optimization behind it. But the B580 is now a usable daily driver for local LLM work.

What Software Works (and What Doesn't)

Works reliably:

- llama.cpp (SYCL backend) — primary inference path

- Ollama — uses llama.cpp underneath, Intel GPU support included

- LM Studio — added Intel Arc support, functional with current drivers

- Basic inference workflows with common models

Limited or unreliable:

- ComfyUI (Stable Diffusion): Arc support exists but is less tested than NVIDIA; some node workflows fail

- Fine-tuning toolchains (Axolotl, Unsloth): CUDA-first; Intel IPEX required, setup is non-trivial

- NVFP4 quantization: NVIDIA-exclusive format, not relevant to Arc

- Some less common model architectures: compatibility gaps appear occasionally, especially for newer model types

For reference: Intel's IPEX stack (the heavier-weight tooling for serious compute) is separate from SYCL and better suited for PyTorch-based workloads if you need fine-tuning or custom inference pipelines.

B580 vs Alternatives at This Price Range

Notes

12GB unique at this price

CUDA, faster but 8GB limit

CUDA, 12GB, older arch The used RTX 3060 12GB at $150-180 is the toughest competition for the B580. It gives you 12GB VRAM, full CUDA compatibility, and similar or faster inference — but it requires navigating the used market, which means verification risk and no warranty.

For new purchases, the B580 at $249 is genuinely the best 12GB option. For budget-flexible buyers willing to go used, a good RTX 3060 12GB is faster and more compatible.

For the full 16GB GPU comparison, see Best 16GB GPU for Local LLMs. For how the B580 ranks against the RTX 5060 Ti and 4060 Ti, see the head-to-head comparison. For the broader AMD vs NVIDIA software ecosystem comparison that contextualizes Intel's position, see AMD vs NVIDIA for local LLMs in 2026.

Who Should Buy the Arc B580

Buy the B580 if:

- Your budget is $249 and you need as much VRAM as possible (12GB is the clear winner here)

- You're on Linux and okay with SYCL backend setup

- Your primary workload is 8B-13B models in llama.cpp or Ollama

- You can accept ~20-30% slower inference than NVIDIA equivalent in exchange for more VRAM per dollar

Don't buy the B580 if:

- Speed is more important than VRAM capacity (the RTX 4060 8GB at $299 is faster for 7B-8B models)

- You need broad software compatibility (ComfyUI, fine-tuning, anything beyond basic inference)

- You're on Windows and expected ROCm-equivalent performance (Windows driver for compute is functional but less tuned than Linux)

- You want to avoid driver/software troubleshooting entirely

Verdict

The Arc B580 is a real card for local LLM inference in 2026 — not a science experiment. At $249, 12GB VRAM, and acceptable inference speeds, it fills a genuine gap in the budget market that NVIDIA hasn't covered.

The 20-30% inference penalty versus CUDA is the honest tradeoff. If you're running 8B models all day, you'll notice the difference. If you're okay with 25 t/s instead of 35 t/s in exchange for 12GB VRAM at $249, the B580 delivers on that promise.

Buy it with clear expectations, not inflated ones.