The Core Question: Cores Without Cache

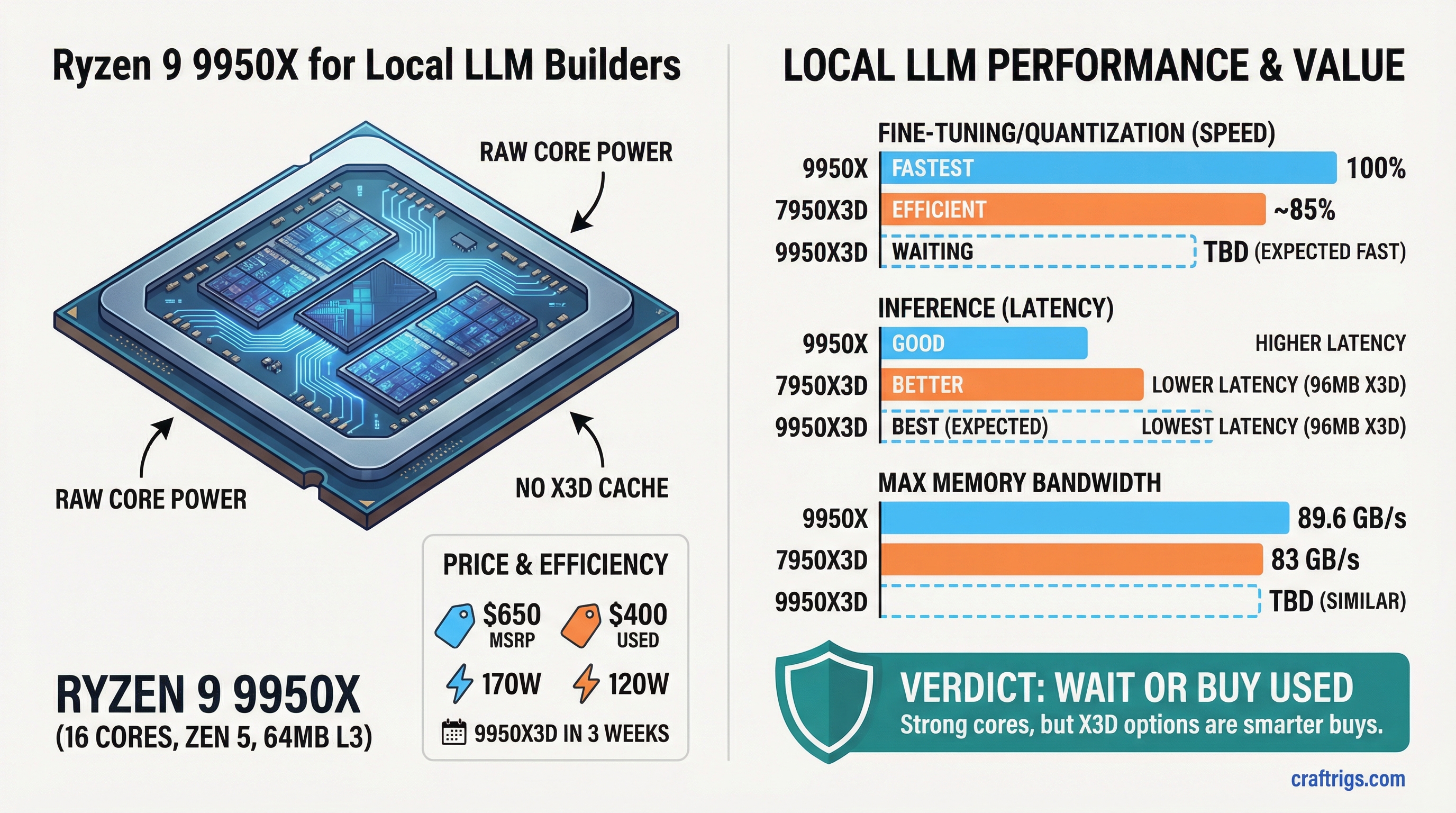

TL;DR: The Ryzen 9 9950X is a solid CPU for power users doing local model fine-tuning and quantization work, but it's caught between a smarter value play (used 7950X3D at $400) and a better X3D variant dropping April 22. Buy the 9950X only if you're running heavy multi-threaded workloads and can't wait. For most AI builders, the 7950X3D used market is the move.

The Ryzen 9 9950X launched in early 2026 with a straightforward pitch: 16 cores, Zen 5 architecture, no X3D cache. AMD's betting that raw parallelism and memory bandwidth beat the specialized cache design of the previous generation. For GPUs serving models, that's academic—the GPU does the work. But for the workloads where CPU still matters (fine-tuning, quantization, KV cache offloading), the 9950X's design choice becomes relevant.

Here's the problem nobody's talking about: AMD launched the 9950X3D the same quarter, dropping April 22, 2026. That CPU has the X3D cache you expected, with the same 16 cores. Buying the non-X3D variant right now means you're either going to regret the purchase in 3 weeks or you're committed to a specific workflow where the standard 9950X actually wins.

This review tells you which one.

The Specs: Where the 9950X Actually Differs

Difference

—

7950X3D slightly higher base

9950X runs hotter

7950X3D has 50% more cache

9950X about 8% higher

Compatible motherboards

Used market: $390–480 The physical difference is stark: the 9950X ditches the 3D V-Cache entirely, betting that a 16-core chip with better memory bandwidth will beat a cache-optimized design for the workloads that matter. That's Zen 5 showing its hand—better per-core performance and bandwidth efficiency, less need for the bandwidth-reducing cache hierarchy of earlier Ryzen 9000 designs.

The TDP gap (170W vs 7950X3D's 120W) is real. You'll need adequate cooling and a solid power supply. The 9950X pulls serious current under load.

Where the 9950X Actually Wins: Multi-Threaded LLM Work

The premise of this review hinges on one question: is there a real-world local LLM workflow where the 9950X beats the 7950X3D enough to justify the $170–250 premium?

The answer is yes, but it's narrow.

Fine-tuning with LoRA: If you're running Hugging Face transformers training loops with 8+ workers, the 9950X's better memory bandwidth helps. Quantization-aware training on a CPU-side coordinate descent loop benefits from the 16 cores at full saturation. Most builders aren't doing this—LoRA runs on GPU or in local vLLM instances. But if you are, you'll see faster epoch times on the 9950X.

Model Quantization at Scale: Converting a 70B model from FP16 to GGUF (llama.cpp format) is a multi-threaded, bandwidth-heavy task. The 9950X's memory bandwidth advantage means slightly faster conversion—maybe 5–15% depending on the model. If you're quantizing 3–4 models per week, that's real time saved. For most builders (quantize once, use the result forever), it's irrelevant.

KV Cache Offloading on CPU RAM: Some inference rigs keep token context on CPU (DDR5 RAM) and offload embeddings to GPU for serving speed. The 9950X's memory bandwidth helps here—you're moving more data per clock between CPU and GPU. With a fast GPU like an H100 or dual-RTX 5090s, this can reduce per-token latency by a couple milliseconds. Again, only relevant if you're running inference-as-a-service locally.

For 90% of CraftRigs readers (single GPU, Ollama or vLLM on Linux, no training), the 9950X adds cost with zero performance gain over a $1,200 system build.

Warning

The used 7950X3D at $400 runs cooler (120W vs 170W), costs less, and handles everything except the three workflows above. If you're not actively doing one of those tasks, buy the 7950X3D used and pocket $250.

Head-to-Head: 9950X vs 7950X3D vs the Incoming 9950X3D

The 7950X3D was released in 2023 and has dominated the "best CPU for local AI" conversation for two years. Here's why:

- Cache advantage on single-thread work: 96 MB of X3D cache crushes the 9950X's 64 MB when running CPU-only inference on small models (Llama 3.1 8B quantized).

- Power efficiency: 120W TDP vs 170W on the 9950X means lower cooling costs and faster thermal throttling avoidance.

- Price: Used 7950X3D at $390–480 (as of April 2026) beats the 9950X's $650 MSRP by a mile.

The 9950X is faster at throughput-limited tasks (multi-threaded batch processing). The 7950X3D is faster at latency-sensitive tasks (single-token generation, cache hits). For local LLM work, latency is almost always the constraint.

Now, the 9950X3D (arriving April 22, 2026) combines 16 cores + 96 MB X3D cache. That's the product the 9950X should've been. When it launches, the non-X3D 9950X becomes a transitional product—you're either buying it at a discount or waiting 3 weeks for the better version.

Recommendation: If you're buying a CPU today (April 4, 2026), grab a used 7950X3D for $400. If you can wait until April 22, the 9950X3D will be the superior play, though probably at $650–700. The standard 9950X is the "bridge" product—not bad, but surrounded by better options.

When the 9950X Actually Makes Sense

Stop me if you're one of these:

Professional quantization pipeline: You convert models for clients, running 2–4 per week. The 9950X's bandwidth edge saves hours per month. Buy it.

Fine-tuning workstation: You're running LoRA training with 16-worker data pipelines on Llama or Mistral models, and you need to saturate all cores. The 9950X beats the 7950X3D noticeably. Buy it.

Hybrid CPU/GPU serving infrastructure: You're running a production local LLM service on your own hardware, offloading KV caches to CPU to burst token throughput. The 9950X's bandwidth advantage helps. Buy it.

Budget isn't tight: You want the latest Zen 5 architecture and prefer new hardware to used. The 9950X is a solid CPU, even if not optimized for your specific task. Buy it without overthinking.

Everyone else: Use that $650 for a better GPU, get the 7950X3D used for $400, or wait for the 9950X3D pricing on April 22. The 9950X isn't a bad CPU—it's just not the right one for most local LLM builders right now.

Verdict: Smart Money vs Cutting Edge

The Ryzen 9 9950X is a technically sound CPU that lands between two smarter choices. It's faster than the 7950X3D at multi-threaded workloads but costs $170–250 more. The 9950X3D is dropping in 3 weeks with the same core count plus better cache, making the standard 9950X look like the bridesmaid at its own wedding.

Buy the 9950X if:

- You're doing heavy multi-threaded LLM work (fine-tuning, quantization) today

- You want Zen 5 architecture and can't wait for 9950X3D pricing

- Budget isn't a constraint

Skip it if:

- You're doing pure inference (get the 7950X3D used for $400)

- You're okay waiting 3 weeks (9950X3D drops April 22)

- Your total system budget is under $2,500 (use that money on GPU instead)

Smart move right now: grab a used 7950X3D for your rig, pocket $250, and deploy it. The performance gap for inference is negligible, and the 7950X3D's lower power draw is a genuine win. The 9950X is the better CPU, but the 7950X3D is the better buy.

FAQ

Is the Ryzen 9 9950X good for running local LLMs?

The 9950X excels at multi-threaded preparation tasks like fine-tuning and quantization, not at serving inference. The GPU does the heavy lifting of model execution. The CPU helps with data loading, quantization, and KV cache management. If you're purely doing inference with a GPU, a cheaper CPU is fine—save the $650 for a faster GPU.

Should I buy the 9950X or wait for the 9950X3D?

The 9950X3D launches April 22, 2026, combining the 16 cores with 96 MB of X3D cache. For inference-heavy loads, the X3D variant will likely be 5–10% faster. If you're doing heavy multi-threaded fine-tuning, the standard 9950X's memory bandwidth might actually be more relevant. Waiting 3 weeks to compare real benchmarks is the prudent move.

Is the 9950X worth $650 when a used 7950X3D is $400?

For fine-tuning and quantization, the 9950X's 16 cores and better memory bandwidth provide a real edge over the 7950X3D. But the $250 price gap is material. If your workload is inference serving on GPU, the 7950X3D is objectively better value. Only buy the 9950X if you're quantizing multiple models per week or running LoRA training regularly.

What's the difference between the 9950X and 7950X3D for local AI work?

The 9950X trades X3D cache for higher memory bandwidth and marginal per-core performance gains (Zen 5 architecture). The 7950X3D is faster at single-threaded work and shines with cached data. For inference, the 7950X3D is faster. For batch multi-threaded work, the 9950X is faster. For most builders, the 7950X3D's power efficiency and lower cost make it the smarter choice.