Prediction markets put the odds at 76% that Gemma 4 ships before April. That's not a rumor: it's the forecast on Manifold Markets, where traders stake play money on outcomes. And two weeks ago, a Google bot account opened a pull request on the Gemma GitHub repo with "Gemma4" in the title. The same thing happened just before Gemma 3 dropped in March 2025.

So if you're running local models and you haven't thought about whether your hardware is ready, now is a good time to do that.

This isn't about whether Gemma 4 will be good. It almost certainly will be: each Gemma generation has been meaningfully better than the last, and Gemma 3's 27B model is already competitive with Google's own Gemini 1.5 Pro on benchmarks while fitting on a single consumer GPU once quantized. The question is whether your rig will actually handle what comes next.

Why This Generation Matters More Than the Last

Gemma 3 changed the math for local AI. Google reported that the Gemma 3 4B instruction-tuned model is competitive with the previous-generation Gemma 2 27B. A 27B model that used to require server-grade hardware became something you could run on an RTX 3090 at home. That kind of efficiency jump is what happens when Google applies distillation training from its Gemini pipeline to a smaller open model.

Gemma 4 will almost certainly do the same thing again — squeeze more out of fewer parameters, add more context, and likely push multimodal capability further into the base variants.

What that means practically: if Gemma 3 is running comfortably on your current hardware, you're probably fine for the entry-level Gemma 4 variants. But if you've been eyeing a hardware upgrade anyway, timing it around this release isn't a bad idea.

The Release Timeline Reality

Gemma 1 launched February 2024. Gemma 2 followed four months later in June. Gemma 3 arrived nine months after that, in March 2025. The trend is longer gaps with bigger jumps.

If that pattern holds — and Google's Hugging Face activity suggests it's about to — Gemma 4 lands somewhere in the March to July 2026 window. The updated collection metadata on Hugging Face earlier this month matches exactly what happened the week before Gemma 3 went public.

The Manifold market gives 75% probability before July and 85% before 2027. The concentration of signal in Q1-Q2 2026 is real.

What Sizes to Expect

Nobody outside Google knows the exact lineup yet, but there are strong signals. A December 2025 post citing early Hugging Face metadata listed parameter ranges of 2B to 8B for the small variants, built on the same architecture as Gemini 3 Flash. That fits Google's habit of shipping an initial lineup and adding variants over the following months: Gemma 2 2B arrived about a month after the 9B and 27B, and Gemma 3n followed Gemma 3 by a little over three months.

Based on Gemma's history, the most likely lineup:

- Gemma 4 1B: the phone-tier model, probably replacing Gemma 3n for edge use cases

- Gemma 4 4B: the sweet spot for consumer GPUs

- Gemma 4 12B: the step-up for 12-16GB GPUs and M-series Mac users

- Gemma 4 27B: the single-GPU flagship

- Possible Gemma 4 40B+: if Google is feeling ambitious, this is where the new GPU generation gets stress-tested

There may also be a Gemma 4n variant for on-device use, continuing the mobile-first thread that Gemma 3n started in June 2025.

[!INFO] Gemma 3n used a "MatFormer" architecture and Per-Layer Embedding (PLE) caching to dramatically cut memory needs on mobile. If Gemma 4 carries this forward, phones with 6-8GB RAM may run models whose raw parameter count is larger than their memory footprint suggests: Gemma 3n E4B has about 8B raw parameters but runs with a footprint comparable to a 4B model.

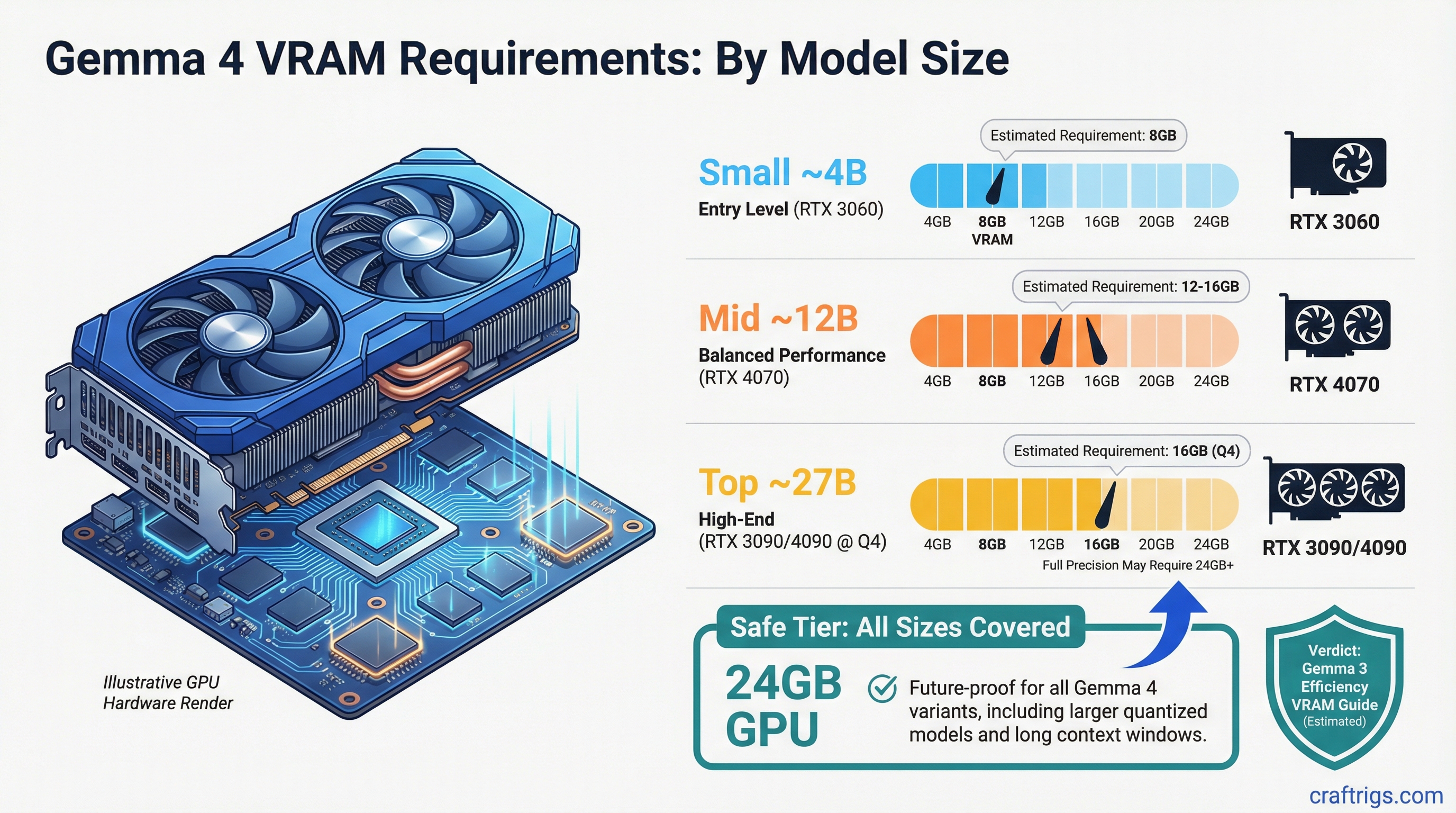

VRAM Requirements by Model Size

Here's where the actual hardware decision lives. These estimates are based on Gemma 3's real-world numbers, adjusted for expected efficiency improvements in the next generation. They're projections, not confirmed specs — treat them as planning targets, not guarantees.

Gemma 4 1B (estimated)

- Q4 quantization: ~2-3 GB VRAM

- Q8: ~3-4 GB VRAM

- Minimum GPU: anything with 4GB or more. Even integrated graphics can handle this at reduced speed.

Gemma 4 4B (estimated)

- Q4: ~4-5 GB VRAM

- Q8: ~7-8 GB VRAM

- Minimum GPU: any 8GB card for Q4; RTX 3060 (12GB) or RX 7700 XT (12GB) for Q8

Gemma 4 12B (estimated)

- Q4: ~8-10 GB VRAM

- Q8: ~13-15 GB VRAM

- Minimum GPU: RTX 4070 Ti (12GB) or a Mac with 16GB unified memory for Q4; a 16GB+ card such as the RX 7900 XT (20GB) for Q8

Gemma 4 27B (estimated)

- Q4: ~16-18 GB VRAM

- Q8: ~28-32 GB VRAM

- Minimum GPU for full Q4: RTX 3090 (24GB), RTX 4090 (24GB), RTX 5090 (32GB)

- For full Q8: RTX 5090 at 32GB (a tight fit at the top of the range) or a dual-GPU setup

Gemma 4 40B+ (speculative)

- Q4: ~24-26 GB VRAM

- Q8: ~40+ GB VRAM

- Minimum: RTX 5090 for Q4, dual-GPU or workstation class for Q8
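The ranges above come from simple arithmetic: weight memory is roughly the parameter count times bits per weight, divided by eight. The sketch below shows that calculation under stated assumptions: about 8.5 bits per weight for llama.cpp's Q8_0 (32 int8 weights plus one fp16 scale per block) and an assumed average of 4.85 for Q4_K_M, which mixes sub-formats. It counts weights only, so KV cache and runtime buffers come on top.

```python
# Rough weight-memory estimate: parameters x bits per weight / 8.
# Assumption: bits-per-weight values are approximate llama.cpp averages.
# Q8_0 stores 32 int8 weights plus one fp16 scale per block (8.5 bits);
# Q4_K_M mixes sub-formats, and 4.85 is an assumed average.
# Weights only: KV cache, activations, and runtime buffers are not included.

BITS_PER_WEIGHT = {
    "bf16": 16.0,
    "Q8_0": 8.5,
    "Q4_K_M": 4.85,
}


def weight_gb(params_billion: float, fmt: str) -> float:
    """Approximate weight size in decimal GB (1 GB = 1e9 bytes)."""
    # (params_billion * 1e9 params) * (bits / 8 bytes) / 1e9 bytes per GB
    return params_billion * BITS_PER_WEIGHT[fmt] / 8


if __name__ == "__main__":
    # Sizes from the speculative Gemma 4 lineup above (not confirmed).
    for size in (1, 4, 12, 27, 40):
        row = ", ".join(
            f"{fmt} {weight_gb(size, fmt):5.1f} GB" for fmt in BITS_PER_WEIGHT
        )
        print(f"{size:>2}B: {row}")
```

The 27B row comes out near 16.4 GB at Q4_K_M and 28.7 GB at Q8_0. The ranges listed above run a little higher because they also budget for context and runtime overhead.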

Warning

The 27B model at Q8 will likely exceed the RTX 4090's 24GB of VRAM. If you're planning to run the flagship at Q8, the RTX 5090's 32GB becomes relevant, not just a luxury. Full bf16 weights for a 27B model take about 54GB, which is beyond any single consumer card. Offloading layers to system RAM can cut throughput by roughly 5-10x.

The GPU Landscape Right Now

The timing of Gemma 4 overlaps with the RTX 5090 being newly available and the RTX 4090 hitting its lowest-ever pricing as the 50-series pushes older inventory down.

RTX 5090: 32GB GDDR7, 1,792 GB/s memory bandwidth, ~$1,999. In Ollama benchmarks on the same Llama model, it hits around 85 tokens/second compared to ~52 on the 4090. For inference, the bandwidth jump matters more than the CUDA core count. The extra 8GB over the 4090 is the real selling point for running 27B models at higher quantizations.

RTX 4090: 24GB GDDR6X, and still the fastest 24GB card on the consumer market. It handles Gemma 3 27B at Q4 without complaint, and probably handles Gemma 4 27B at Q4 too, assuming Google doesn't wildly inflate the parameter count. If you already own one, you don't have a hardware problem.

RTX 3090: 24GB, with lower memory bandwidth than the 4090 (936 GB/s vs 1,008 GB/s) but dramatically cheaper used (~$400-600 depending on market). If you want 27B-class models on a tight budget, this is still the working answer. The performance gap to a 4090 is real but not crippling for inference.

Apple Silicon: the M4 Max with 128GB unified memory is genuinely interesting for people who want to run 40B+ models without a multi-GPU setup. Memory bandwidth sits at 546 GB/s, less than a third of the 5090's, but keeping an entire large model in memory without offloading means sustained throughput stays higher than you'd expect. The tradeoff is no CUDA: you're on Apple's Metal stack, using llama.cpp, Ollama, or MLX.
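Why bandwidth dominates can be put into numbers. During single-user token generation, a dense model reads roughly all of its weights from memory for every token, so bandwidth divided by model size gives a ceiling on tokens per second. This is a minimal sketch assuming a 27B model at Q4_K_M weighs about 16.4 GB; it ignores KV-cache reads and compute limits, so treat its outputs as upper bounds, not benchmarks.

```python
# Upper-bound decode speed for a memory-bandwidth-bound dense model:
# each generated token streams (roughly) every weight from memory once,
# so tokens/s <= bandwidth / bytes read per token.
# Assumptions: single-stream decoding, weights fully resident in GPU or
# unified memory, KV-cache traffic and compute limits ignored.

BANDWIDTH_GBPS = {
    "RTX 5090": 1792,
    "RTX 4090": 1008,
    "RTX 3090": 936,
    "M4 Max": 546,
}


def max_tokens_per_second(bandwidth_gbps: float, model_gb: float) -> float:
    """Theoretical ceiling on tokens/s when decoding is bandwidth-bound."""
    if model_gb <= 0:
        raise ValueError("model_gb must be positive")
    return bandwidth_gbps / model_gb


if __name__ == "__main__":
    model_gb = 16.4  # assumed: ~27B model at Q4_K_M, weights only
    for name, bw in BANDWIDTH_GBPS.items():
        print(f"{name:>8}: <= {max_tokens_per_second(bw, model_gb):5.1f} tok/s")
```

The ratios matter more than the absolute figures. The 5090's ceiling is about 1.8x the 4090's, and the 3090 trails the 4090 by under 10%, which is why the gap between them is noticeable but not crippling.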

Tip

If you're on a Mac with 36GB+ unified memory, wait and see what the Gemma 4 12B actually needs before spending anything on GPU hardware. Apple Silicon is consistently underrated for running 12B-class models, and the 12B will likely be the most-used variant anyway.

The Quantization Play

Most people running local models are not using full precision. Q4_K_M quantization, the default in Ollama, cuts weight memory by roughly 70% compared to bfloat16. The quality tradeoff at Q4 is minor for instruction-following tasks and more noticeable for complex reasoning.
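The savings relative to bfloat16 follow directly from bits per weight. This sketch assumes the same approximate llama.cpp averages (8.5 bits for Q8_0, an assumed 4.85 for Q4_K_M, which varies slightly by model).

```python
# Fraction of weight memory saved versus bf16 (16 bits per weight).
# Assumption: approximate average bits per weight for llama.cpp formats;
# the Q4_K_M figure varies slightly from model to model.
FORMATS = {"Q8_0": 8.5, "Q4_K_M": 4.85}


def reduction_vs_bf16(bits_per_weight: float) -> float:
    """Return the fraction of bf16 weight memory saved (0.5 means half)."""
    return 1.0 - bits_per_weight / 16.0


if __name__ == "__main__":
    for name, bits in FORMATS.items():
        print(f"{name}: about {reduction_vs_bf16(bits):.0%} smaller than bf16")
```

This prints about 47% for Q8_0 and 70% for Q4_K_M. Savings on total memory are somewhat smaller, since weight quantization alone does not shrink the KV cache or runtime buffers.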

Q8 quantization is close enough to full precision that it's worth targeting for the 12B and smaller variants, where you have the VRAM headroom. For 27B+, Q4 or Q5 is the practical choice on consumer hardware.
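The gap between Q8 and Q4 comes down to how many levels each block of weights can take. Below is a minimal sketch of symmetric absmax block quantization (blocks of 32 weights, one scale per block), used here as a simplified stand-in: it resembles llama.cpp's Q8_0 in spirit but is not the actual Q4_K_M K-quant scheme, and the weights are synthetic Gaussian values rather than a real model's.

```python
# Simplified block quantization showing why 8-bit is near-lossless and
# 4-bit is coarser. Symmetric absmax over blocks of 32 weights; similar in
# spirit to llama.cpp's Q8_0, NOT the real K-quant (Q4_K_M) format.
import random


def quantize_block(values: list, bits: int) -> list:
    """Quantize one block to signed `bits`-bit ints and dequantize it back."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8-bit, 7 for 4-bit
    absmax = max(abs(v) for v in values)
    if absmax == 0:
        return [0.0] * len(values)
    scale = absmax / qmax  # one float scale per block
    return [max(-qmax, min(qmax, round(v / scale))) * scale for v in values]


def mean_abs_error(weights: list, bits: int, block: int = 32) -> float:
    """Average absolute reconstruction error across all blocks."""
    total = 0.0
    for start in range(0, len(weights), block):
        chunk = weights[start:start + block]
        restored = quantize_block(chunk, bits)
        total += sum(abs(a - b) for a, b in zip(chunk, restored))
    return total / len(weights)


if __name__ == "__main__":
    random.seed(0)
    # Synthetic stand-in for a weight tensor: small Gaussian values.
    weights = [random.gauss(0.0, 0.02) for _ in range(32 * 1024)]
    for bits in (8, 4):
        print(f"{bits}-bit mean abs error: {mean_abs_error(weights, bits):.6f}")
```

With 127 levels per sign instead of 7, the 8-bit error comes out many times smaller than the 4-bit error on the same data. That illustrates the trend only; it is not a proxy for benchmark quality.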

The format matters too. GGUF (used by llama.cpp, Ollama, LM Studio) gives you much more granular quantization control than anything you'd get out of a PyTorch checkpoint directly. When Gemma 4 drops, community GGUF uploads on Hugging Face will typically appear within 24-48 hours of the official weights release.
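Community GGUF uploads are multi-gigabyte downloads, so a quick sanity check is to read the fixed header before loading a file. The sketch below follows the published GGUF layout: the magic bytes `GGUF`, a uint32 version, then uint64 tensor and metadata counts, little-endian, for version 2 and later. It does not parse the metadata key-value pairs or quantization types, and it does not handle big-endian files. The script name in the usage comment is hypothetical.

```python
import struct
import sys
from typing import NamedTuple

# Fixed GGUF header layout (little-endian): 4-byte magic b"GGUF",
# uint32 version, uint64 tensor count, uint64 metadata key-value count.
# Version 1 used 32-bit counts and is rejected here; big-endian files
# and the metadata/tensor sections that follow are not parsed.
HEADER_SIZE = 24


class GGUFHeader(NamedTuple):
    version: int
    tensor_count: int
    metadata_kv_count: int


def parse_gguf_header(data: bytes) -> GGUFHeader:
    """Validate the magic bytes and unpack the fixed-size GGUF header."""
    if len(data) < HEADER_SIZE or data[:4] != b"GGUF":
        raise ValueError("not a GGUF file (bad magic or truncated header)")
    version, tensors, kv_pairs = struct.unpack("<IQQ", data[4:HEADER_SIZE])
    if version < 2:
        raise ValueError(f"GGUF v{version} uses 32-bit counts; not handled here")
    return GGUFHeader(version, tensors, kv_pairs)


if __name__ == "__main__" and len(sys.argv) > 1:
    # Usage: python check_gguf.py path/to/model.gguf  (hypothetical script name)
    with open(sys.argv[1], "rb") as fh:
        print(parse_gguf_header(fh.read(HEADER_SIZE)))
```

A truncated or mislabeled download fails the magic check immediately, before any loader spends time mapping the file.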

What to Actually Do Right Now

If you're running an 8GB GPU, you're fine for the 4B model, and that's probably not changing. But 8GB caps you out fast. An RTX 4070 Ti (12GB) or 4070 Ti Super (16GB) is a reasonable middle step that handles the 12B at Q4 without drama.

If you have a 24GB card already, don't upgrade. The 5090 is a genuine improvement but not a mandatory one unless you specifically need to run the 27B at Q8 or larger models without VRAM offloading.

If you're building a new rig from scratch specifically for Gemma 4 and models of that generation, the RTX 5090 is the answer. Yes, it's expensive. But the VRAM jump from 24GB to 32GB is the relevant number, not the compute gain.

And if you're on a Mac with 24GB or more of unified memory — sit tight. You're in better shape than most of the PC crowd for the 12B variant, and the Apple ecosystem support for Gemma models has been solid since Gemma 3 launched.
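The advice above boils down to a lookup: find the largest variant whose projected footprint fits your VRAM. This is a minimal sketch that uses the upper end of each range from this article's speculative estimates, not confirmed specs. Variant names are the hypothetical lineup from earlier, and Mac unified memory is not modeled.

```python
from typing import Optional

# Projected footprints from this article (GB), not confirmed specs:
# (variant, upper end of the Q4 range, upper end of the Q8 range).
# The 40B+ Q8 figure is open-ended ("~40+"), so 40 is only a lower bound.
ESTIMATES = [
    ("1B", 3, 4),
    ("4B", 5, 8),
    ("12B", 10, 15),
    ("27B", 18, 32),
    ("40B+", 26, 40),
]


def largest_fit(vram_gb: float, quant: str = "Q4") -> Optional[str]:
    """Return the largest variant whose projected footprint fits in vram_gb."""
    column = {"Q4": 1, "Q8": 2}[quant]
    best = None
    for row in ESTIMATES:  # ordered smallest to largest
        if row[column] <= vram_gb:
            best = row[0]
    return best


if __name__ == "__main__":
    # Discrete GPUs only: macOS keeps part of unified memory for the system,
    # so a Mac's usable GPU memory is below its headline figure.
    for vram in (8, 12, 16, 24, 32):
        print(f"{vram:>2} GB  Q4: {largest_fit(vram)}  Q8: {largest_fit(vram, 'Q8')}")
```

An 8GB card lands on the 4B, a 12GB card on the 12B at Q4, a 24GB card on the 27B at Q4, and 32GB is the first tier where the 27B fits at Q8.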

Gemma 4 is close. The signal-to-noise ratio on these pre-release indicators is unusually high right now. Pre-position your hardware decisions before the launch day articles hit and the secondary GPU market reacts.