The 16GB Tier Is Suffocating — And It's Not an Accident

The RTX 5060 Ti 16GB is being systematically squeezed out of production, not because it's a bad card, but because it's the wrong margin play. ASUS hasn't officially killed it — the company denies discontinuation — but production has cratered. Stock at Newegg, Amazon, and B&H has been sparse since March 2026, with retail pricing creeping above MSRP when it does show up. Meanwhile, the 8GB variant and its bigger sibling, the RTX 5070 Ti 16GB, maintain steady supply. This isn't a bottleneck. It's deliberate product management.

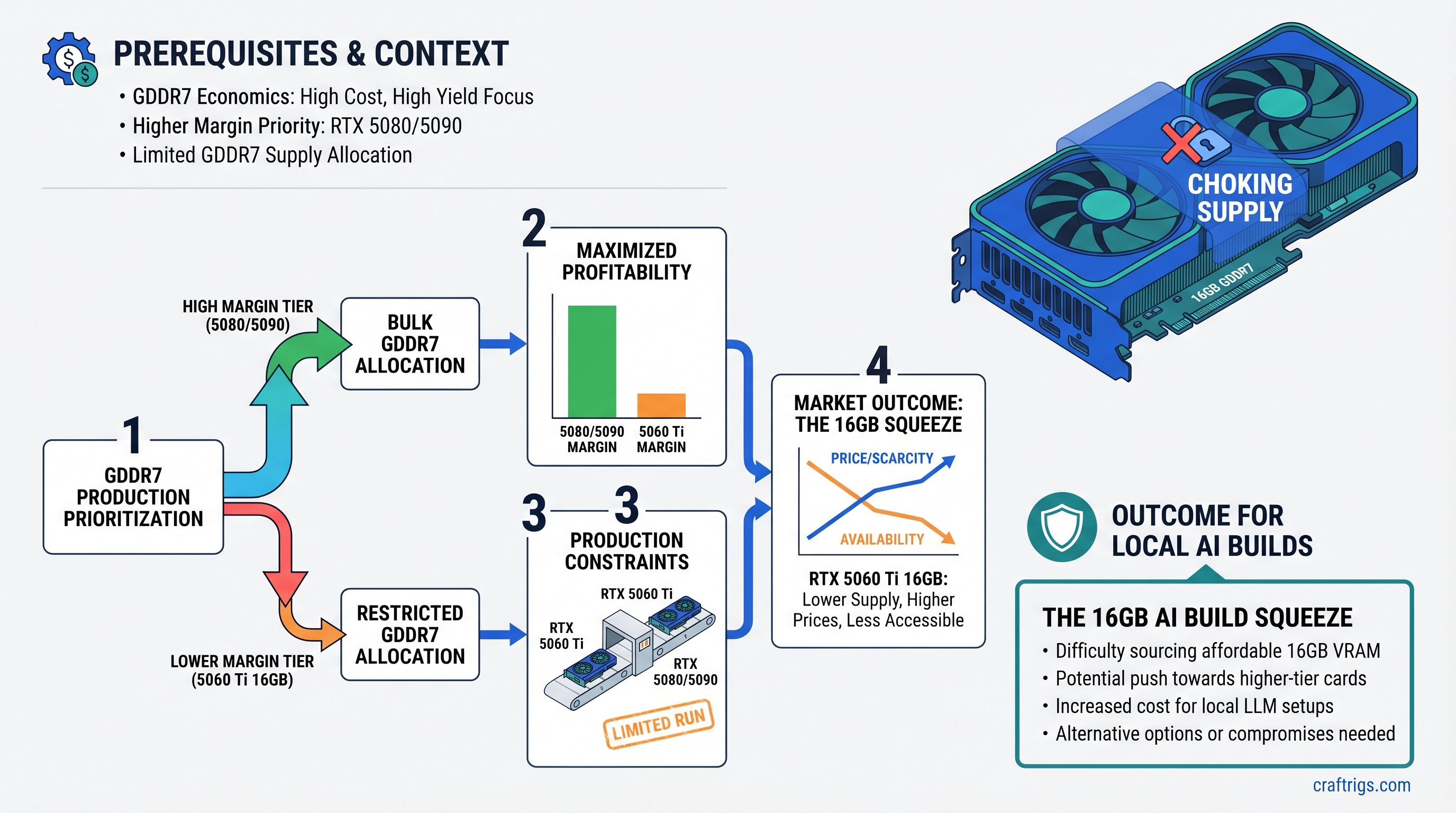

The villain isn't a shortage of GDDR7 chips. The villain is arithmetic.

In January 2026, Gigabyte's CEO disclosed the economics that explain what's happening: gross revenue per gigabyte of GDDR7 is higher when manufacturers and suppliers build two 8GB cards than when they build one 16GB card. The same silicon, the same total VRAM, but a different bottom line. And the supply chain optimizes for the bottom line.

What's Replacing It

The RTX 5060 Ti product line now has a gap you can drive a truck through.

Entry tier: RTX 5060 Ti 8GB at $379 MSRP. It handles 7B–8B models comfortably and 13B–14B models only with tight quantization; 30B-class models do not fit.

Next step: RTX 5070 Ti 16GB at $749. That's a $370 price jump for one additional tier.

No 16GB option exists between them. No 8GB option exists above the entry-level gaming tier. The 16GB middle tier, the sweet spot for 13B-class models through Qwen 32B Q4, has been erased.

GDDR7 Economics: Why Margin Per Gigabyte Beats Everything Else

Here's how the calculus works, and why one discontinued product reveals how the entire supply chain thinks.

GDDR7 is expensive. It's faster than GDDR6, power-efficient, and enables higher clocks — but manufacturing a GDDR7 module costs more per gigabyte than GDDR6 ever did. The industry estimates GDDR7 carries roughly a 20–40% cost-per-gigabyte premium over GDDR6.

When you have a scarce, expensive resource, you don't allocate it to maximize customer choice. You allocate it to maximize revenue per unit of resource consumed.

Gigabyte's CEO statement was clear on this point: revenue-per-gigabyte determines which GPUs get priority allocation. The RTX 5060 Ti 16GB generates the lowest revenue-per-gigabyte of any RTX 50-series card because it's the lowest-priced 16GB option. It's a high-volume, low-margin product — the exact opposite of what supply chains prioritize when resources are tight.

Compare that to two RTX 5060 Ti 8GB cards:

- Two 8GB cards: 16GB total VRAM, two separate SKU sales, two separate margin opportunities. At the $379 MSRP, that is $758 of retail revenue from 16GB of GDDR7.

- One 16GB card: 16GB total VRAM, one SKU, one margin event. At the $429 MSRP, that is a little over half the revenue from the same amount of memory.
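The comparison can be checked with a few lines of arithmetic. This is a minimal sketch that treats the MSRPs quoted in this article ($379 and $429) as a rough stand-in for revenue; real margins depend on costs the article does not disclose, and the `revenue_from_pool` helper is purely illustrative.

```python
# MSRPs quoted in this article: (price in USD, VRAM in GB).
MSRP_USD = {
    "RTX 5060 Ti 8GB": (379, 8),
    "RTX 5060 Ti 16GB": (429, 16),
}
GDDR7_POOL_GB = 16  # the same 16GB of memory, packaged two different ways


def revenue_from_pool(price_usd, vram_gb, pool_gb=GDDR7_POOL_GB):
    """Retail revenue if the whole memory pool is sold as cards of this size."""
    cards = pool_gb // vram_gb
    return cards * price_usd


results = {}
for name, (price, vram) in MSRP_USD.items():
    total = revenue_from_pool(price, vram)
    results[name] = total / GDDR7_POOL_GB  # dollars per gigabyte of GDDR7
    print(f"{name}: ${total} from {GDDR7_POOL_GB}GB = ${results[name]:.2f}/GB")

ratio = results["RTX 5060 Ti 8GB"] / results["RTX 5060 Ti 16GB"]
print(f"8GB packaging earns {ratio:.2f}x the revenue per gigabyte")
```

On these assumptions the 8GB packaging brings in roughly 1.77 times the retail revenue per gigabyte, which is the gap the allocation logic responds to.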

NVIDIA wins by pushing buyers away from single 16GB cards and toward either dual-card builds or stepping up to a more expensive tier. The supply chain wins because fewer high-margin SKUs are easier to manage than many low-margin ones.

The GDDR7 Reallocation

Where are the freed GDDR7 packages going?

Primarily into RTX 5070 and RTX 5070 Ti (12GB and 16GB, respectively) and RTX 5060 Ti 8GB volume production, the cards that generate acceptable revenue-per-gigabyte. The 5060 Ti 16GB simply doesn't make the cut. Freed allocations aren't being hoarded; they're being redirected to products with better unit economics.

Where NVIDIA Is Herding Demand Instead

This isn't passive scarcity. This is active steering.

The RTX 5070 Ti 16GB ($749) is now the "16GB sweet spot" — except it's at mid-premium pricing, not mid-range. If you wanted 16GB at the 5060 Ti price tier ($429), you're out of luck.

The RTX 5060 Ti 8GB ($379) gets pushed into an entry-gaming role, when its real purpose was mid-range AI. It can't comfortably handle the models that made the 16GB version useful.

The used GPU market absorbs what new products no longer serve. A used RTX 3090 24GB trades for around $1,063 as of April 2026. That's expensive, about $314 more than a new RTX 5070 Ti, but it buys 24GB rather than 16GB, which is more VRAM than any new card at or below $749 offers.

This is the real story: NVIDIA's product ladder has been restructured to eliminate the 16GB mid-range tier entirely. Buyers either accept 8GB limits or jump to premium tiers or the used market.

What This Means for Budget Builders (Three Actual Options)

You need 16GB VRAM. You're not going to wait two years for supply to normalize. What do you actually do?

Option 1: Hunt for RTX 5060 Ti 16GB remaining stock. If you find it, buy it immediately. Expect to pay $449–$549 (above MSRP due to scarcity). This is the best current option if stock exists in your region, but don't count on finding it.

Option 2: Accept RTX 5060 Ti 8GB ($379) and work within its constraints. It runs:

- Llama 3.1 8B, quantized (comfortable; the 16-bit weights alone are about 16GB and will not fit)

- Mistral 7B, quantized (comfortable)

- Qwen 14B Q4 quantization (tight; expect to offload some layers to system RAM)

It does not run well:

- 13B-class models at Q5 or higher (Q4 already needs ~8.5–10GB, and you have 8GB)

- Qwen 32B at any quantization

- Larger 30B+ models, even at Q4_K_M

If your workload is coding assistance or 8B/14B models, this works. If you need 30B, it doesn't.
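A back-of-the-envelope estimate shows where these limits come from. The sketch below assumes VRAM need is roughly weight storage (parameters times bits per weight) plus a flat allowance for KV cache and runtime buffers. The 1.5GB overhead and the 4.5 effective bits for a Q4-style quantization are assumptions chosen for illustration, not measured values, and `estimate_vram_gb` is a hypothetical helper.

```python
def estimate_vram_gb(params_billion, bits_per_weight, overhead_gb=1.5):
    """Rough VRAM need: weight storage plus a flat allowance (assumed) for
    KV cache and runtime buffers."""
    weights_gb = params_billion * bits_per_weight / 8  # 1B params at 8 bits ~ 1GB
    return weights_gb + overhead_gb


VRAM_BUDGET_GB = 8  # RTX 5060 Ti 8GB

# (label, parameters in billions, assumed effective bits per weight)
candidates = [
    ("8B at 16-bit", 8, 16),
    ("8B at ~4.5-bit (Q4-style)", 8, 4.5),
    ("14B at ~4.5-bit (Q4-style)", 14, 4.5),
    ("32B at ~4.5-bit (Q4-style)", 32, 4.5),
]

for label, params, bits in candidates:
    need = estimate_vram_gb(params, bits)
    verdict = "fits" if need <= VRAM_BUDGET_GB else "over budget"
    print(f"{label}: ~{need:.1f}GB -> {verdict}")
```

Under these assumptions only the quantized 8B model stays inside 8GB. A 14B model at Q4 lands a little over, which is why it counts as tight, and a 32B model is far out of reach. Longer context windows push the overhead higher than the flat allowance used here.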

Option 3: Jump to the used market with an RTX 3090 24GB. At ~$1,063, it costs far more than the RTX 5060 Ti at MSRP and about $314 more than a new RTX 5070 Ti ($749), but it comes with 24GB instead of 16GB. You get:

- ~87 tokens/second on Llama 3.1 8B (vs ~60 on RTX 5060 Ti 8GB)

- Comfortable handling of 30B models Q4 (vs impossible on 8GB)

- Backwards compatibility with your existing CUDA ecosystem

The catch: NVIDIA doesn't want you to buy used 3090s. But the supply-chain math pushes you there anyway.
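One way to compare the three options is dollars per gigabyte of VRAM. The sketch below uses only prices quoted in this article; the $549 figure is the top of the scarcity range mentioned for the 16GB card, and the `usd_per_gb` helper is illustrative. It ignores speed, power draw, warranty, and used-market risk.

```python
# (price in USD, VRAM in GB), all taken from figures quoted in this article.
options = {
    "RTX 5060 Ti 8GB (MSRP)": (379, 8),
    "RTX 5060 Ti 16GB (MSRP)": (429, 16),
    "RTX 5060 Ti 16GB (scarcity high end)": (549, 16),
    "RTX 5070 Ti 16GB (MSRP)": (749, 16),
    "Used RTX 3090 24GB": (1063, 24),
}


def usd_per_gb(price_usd, vram_gb):
    """Buyer-side cost of each gigabyte of VRAM."""
    return price_usd / vram_gb


# Cheapest VRAM first.
ranked = sorted(options.items(), key=lambda kv: usd_per_gb(*kv[1]))
for name, (price, vram) in ranked:
    print(f"{name}: ${price} / {vram}GB = ${usd_per_gb(price, vram):.2f} per GB")
```

On these numbers the 16GB 5060 Ti is the cheapest VRAM per dollar even at scarcity pricing, and the used 3090 edges out both remaining new cards per gigabyte despite its higher sticker price.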

What About Dual-Card RTX 5060 Ti 8GB?

Two 8GB cards give you 16GB in total if you're willing to split a model across them over PCIe (the RTX 5060 Ti has no NVLink connector), but this assumes:

- You have the motherboard space (two GPUs need slot spacing and airflow planning)

- You accept the overhead of splitting workloads across cards

- Your software (llama.cpp, vLLM) supports multi-GPU acceleration

It's viable, but it's more complex than one card. For a first-time builder, it's overkill.
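To make "splitting workloads across cards" concrete, here is a minimal sketch of one common approach: assigning whole transformer layers to each GPU in proportion to its VRAM. The `split_layers` function is hypothetical and is not the algorithm llama.cpp or vLLM actually uses. llama.cpp exposes a `--tensor-split` option for setting per-GPU proportions, and the sketch only illustrates the idea behind such a setting.

```python
def split_layers(n_layers, vram_gb_per_gpu):
    """Assign whole layers to GPUs in proportion to each GPU's VRAM.

    Illustrative only: real runtimes also account for KV cache,
    embeddings, and per-device scratch buffers.
    """
    total_vram = sum(vram_gb_per_gpu)
    shares = [n_layers * v / total_vram for v in vram_gb_per_gpu]
    counts = [int(s) for s in shares]  # round down first

    # Hand any leftover layers to the GPUs with the largest fractional share.
    leftover = n_layers - sum(counts)
    by_fraction = sorted(
        range(len(shares)), key=lambda i: shares[i] - counts[i], reverse=True
    )
    for i in by_fraction[:leftover]:
        counts[i] += 1
    return counts


# Two RTX 5060 Ti 8GB cards and a hypothetical 40-layer model.
print(split_layers(40, [8, 8]))
```

With identical cards the split is even, so each GPU holds half the layers and activations cross PCIe once per token at the boundary. That hand-off is the overhead a single 16GB card avoids.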

Why This Happened: Supply Rationality, Not Demand

One clarification is crucial: the RTX 5060 Ti 16GB isn't being starved because buyers didn't want it. Demand was steady. This is pure supply-side economics.

GDDR7 is scarce. NVIDIA has finite allocation from memory suppliers. When you allocate a scarce resource, you optimize for revenue-per-unit-of-resource, not customer satisfaction. A 16GB card consumes twice the GDDR7 of an 8GB card but sells for only $50 more at MSRP, so it loses the allocation fight.

The moment GDDR7 supply becomes abundant (likely 2027), expect NVIDIA to resurrect the 16GB tier. Until then, the middle is gone.

GDDR7 Scarcity Is Real, But the Economics Are What Matter

Yes, GDDR7 production is tight. Chip manufacturers (SK Hynix, Samsung, Micron) are expanding capacity, but it takes time. However — and this is key — the 16GB tier didn't disappear because there's no GDDR7. It disappeared because GDDR7 allocations go to higher-margin products first.

If NVIDIA cared more about customer choice than margin, it could demand that suppliers prioritize 16GB modules. But that's not how supply chains work when resources are tight. Margin drives allocation.

The Uncomfortable Truth: You're Being Sorted

Budget buyers get pushed down to 8GB (the entry tier). Mid-range buyers get pushed up to $749 for RTX 5070 Ti or into the used market. Premium buyers get the new architecture at new-product pricing.

This is intentional. NVIDIA didn't accidentally create a $370 price gap between the RTX 5060 Ti 8GB and RTX 5070 Ti. The supply constraint on 16GB makes that gap feel natural — unavoidable, even. But it's not. It's a feature of the supply-chain optimization, not a bug.

The used RTX 3090 24GB market thrives because of this restructuring. Buyers who need 16GB or more and can't find a mid-priced new card have to go used, even though a 3090 at ~$1,063 costs more than the $749 RTX 5070 Ti. That market is growing, which is exactly what happens when supply chains eliminate the sweet-spot product and force people to compromise up or compromise down.

Key Takeaways

- RTX 5060 Ti 16GB is supply-starved, not dead: production is constrained, not canceled, but it won't recover until GDDR7 supply loosens.

- This is margin math, not technical failure: two 8GB cards generate higher revenue-per-gigabyte than one 16GB card. The supply chain chose accordingly.

- If you need 16GB right now, your actual options are:

  - Buy remaining RTX 5060 Ti 16GB stock (difficult, expensive)

  - Jump to used RTX 3090 24GB (~$1,063, better-specified)

  - Accept RTX 5060 Ti 8GB ($379, constrained to ≤14B quantized models)

- The 16GB middle tier won't return until GDDR7 is plentiful: expect 2027 at the earliest.

- Dual-card RTX 5060 Ti 8GB setups are technically possible but complex: splitting a model across two cards over PCIe adds overhead that a single card avoids.

The uncomfortable reality: NVIDIA's supply-chain decisions are optimizing for shareholder value, not customer experience. When those two goals diverge, supply chain wins. The 16GB tier was caught in the middle.

FAQ

Isn't there a risk buying a $1,063 used GPU?

Yes. Some used GPUs arrive dead or failing, and eBay and HardwareSwap offer limited recourse. Buy from established sellers with return policies, not mystery listings, and test the card immediately upon receipt. A 7-day return window on a $1,063 purchase is cheap insurance; a blind gamble is not.

Will RTX 5070 Ti 16GB GDDR6 versions come back?

No. NVIDIA moved entirely to GDDR7 for this generation. GDDR6 variants would be lower-cost and might revive the 16GB sweet spot, but they'll never ship—the product ladder is locked.

Should I wait for RTX 5050 to have a 16GB version?

Don't count on it. If NVIDIA's margin math says 16GB is unprofitable, they'll structure the entire 50-series line to avoid it—even the entry tier.

Can I run two RTX 5060 Ti 8GB cards without NVLink?

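Yes, and it is the only way, since these cards have no NVLink connector. llama.cpp can split a model's layers across both GPUs, and vLLM can shard a model with tensor parallelism, so the two cards can serve a single model between them. The caveat is that all inter-GPU traffic crosses PCIe, so expect some overhead and extra configuration compared with one 16GB card.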