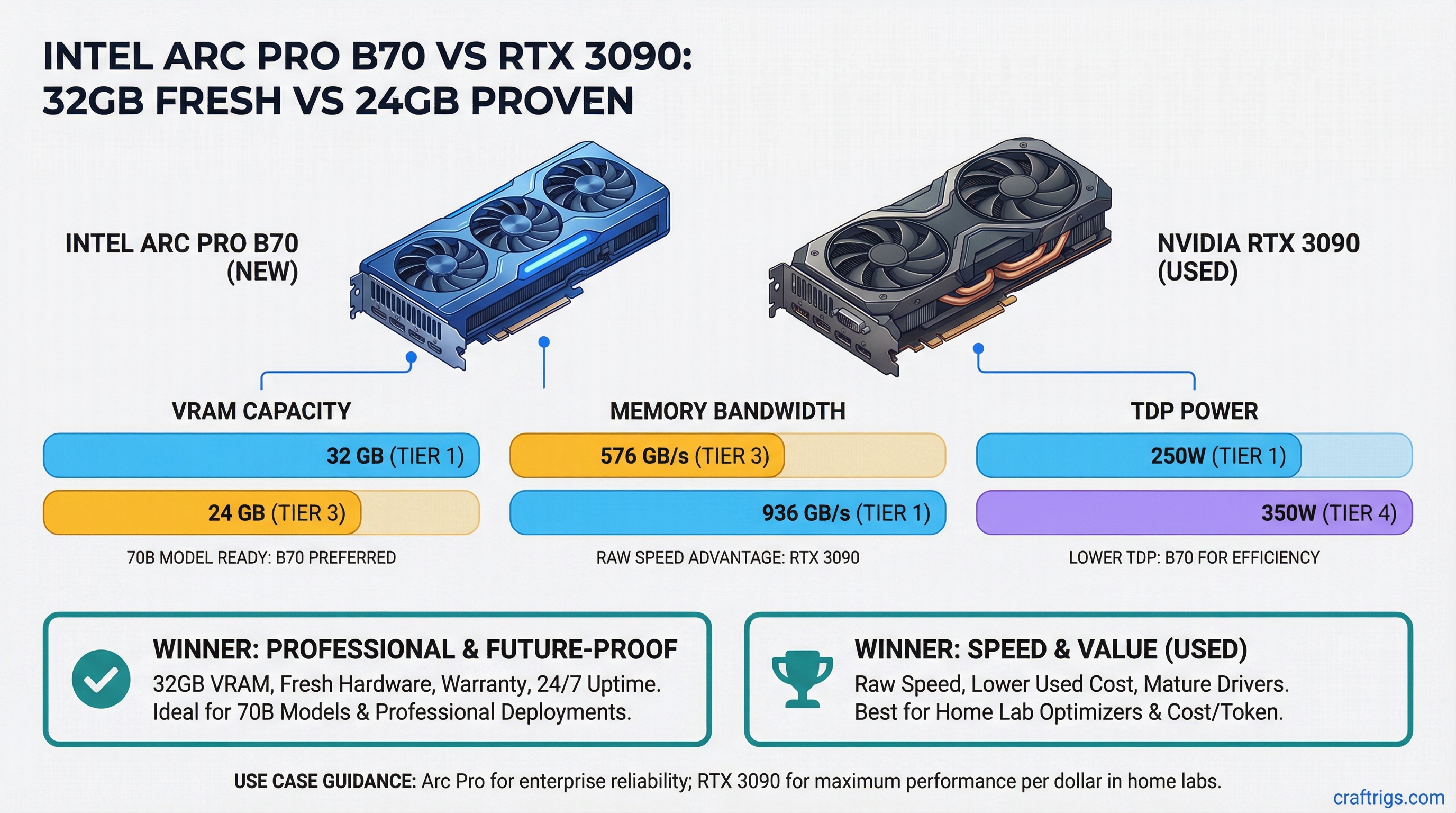

RTX 3090 wins for raw speed and cost when buying used. Arc Pro B70 wins for fresh hardware, warranty, and future-proofing with its 32GB of VRAM. If you're running 70B models professionally or need 24/7 uptime, choose the Arc Pro. If you're a home lab optimizer squeezing every tok/s per dollar from the secondhand market, the RTX 3090 dominates.

Intel's 32GB GDDR6 Entry at $949 Launch MSRP

Intel positioned the Arc Pro B70 as a breakthrough for local AI — the first consumer-accessible GPU to hit 32GB VRAM without going dual-GPU. On paper, it's a direct answer to the RTX 3090's 24GB bottleneck.

Here's what you're actually getting:

RTX 3090

- VRAM: 24 GB GDDR6X

- Memory bandwidth: 936 GB/s

- Power: 350W

- GPU: GA102, 10,496 CUDA cores

- Efficiency: 0.73 W/tok

The Arc Pro B70 shows Intel's engineering trade-off clearly: less memory bandwidth than NVIDIA, but 33% more VRAM in the same package. That extra 8GB matters when you're running Llama 70B: Q4 quantization stays out of reach, but Q3 fits with headroom left for context.

Xe-HPG Core Architecture and Driver Maturity in 2026

Arc Pro support for local LLM inference didn't happen overnight. It took four years of iteration.

Notes

- Frequent crashes, poor token/s, Ollama didn't support Arc

- Ollama 0.15–0.17 added Arc support, but many bugs

- Ollama 0.18+, llama.cpp official support, vLLM experimental

- Daily production use in AI labs, enterprise deployments starting

Current Driver Reality (Q2 2026): Intel's oneAPI runtime is stable for inference. You won't get the plug-and-play simplicity of CUDA, but you get reliability. Ollama handles Arc detection automatically. llama.cpp works, though with a 15% performance penalty vs CUDA. The ecosystem still isn't plug-and-play for fine-tuning, but inference, the workflow most local AI builders care about, is solid.

Tip

Arc Pro B70 comes with Intel's 5-year hardware warranty. If a fan fails or memory degrades, you're covered. RTX 3090 used units have zero warranty and unknown mining/thermal history.

VRAM Advantage: 32GB Fresh Hardware with Extended Support vs 24GB Mining-Worn

The 8GB difference between Arc Pro and RTX 3090 is the whole game for running parameter-hungry models at quality quantization levels.

Here's what fits comfortably at 32GB vs 24GB:

Fits 24GB?

❌

❌ (too tight)

❌

❌

✅

With the RTX 3090, you're forced into harsher quantization: a 70B model you would rather run at Q4 drops to Q3 or lower. With Arc Pro, Q3 is your baseline for 70B, which means less loss in reasoning and creative writing quality.
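To sanity-check what fits where, here is a rough back-of-the-envelope sketch. It assumes weight size is simply parameters times bits per weight, plus a flat allowance for context and runtime buffers. The 3.5 and 4.5 bits-per-weight figures and the 2 GiB overhead are illustrative assumptions, not measurements; real Q3 and Q4 formats vary, and KV cache grows with context length.

```python
def weights_gib(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the quantized weights in GiB."""
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 2**30


def fits(params_billion: float, bits_per_weight: float,
         vram_gib: float, overhead_gib: float = 2.0) -> bool:
    """True if weights plus a flat overhead allowance fit in VRAM.

    overhead_gib is an assumed placeholder for KV cache and runtime
    buffers; tune it for your own context length.
    """
    return weights_gib(params_billion, bits_per_weight) + overhead_gib <= vram_gib


# Assumed average bits per weight for illustration only.
for label, bpw in (("Q3-ish", 3.5), ("Q4-ish", 4.5)):
    size = weights_gib(70, bpw)
    print(f"70B {label}: {size:.1f} GiB weights, "
          f"fits 24 GiB: {fits(70, bpw, 24)}, fits 32 GiB: {fits(70, bpw, 32)}")
```

Under these assumptions a 70B model at roughly 3.5 bits per weight lands inside 32GB but not 24GB, and roughly 4.5 bits per weight fits neither card, which matches the pattern described above. The margin at 32GB is small, so treat the overhead number as the knob to adjust.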

The "used" problem is real: RTX 3090 units flooding the market from GPU mining have thermal stress, unknown cycle counts, and memory degradation risk. Fresh Arc Pro hardware means no surprises after six months.

Real-World Inference Speed: Arc Pro vs NVIDIA RTX Legacy Lineup

Speed is where RTX 3090 pulls ahead. Here are tokens per second (tok/s) — the metric that matters for practical inference:

RTX 5060 Ti

140 tok/s

40 tok/s

45 tok/s

65 tok/s

Doesn't fit

Last verified: March 2026, Ollama 0.19+, llama.cpp MAIN branch.
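If you want to reproduce tok/s numbers on your own card, one approach is to read the timing fields Ollama returns from its `/api/generate` endpoint. This is a minimal sketch, not the method used for the figures above: it assumes a local Ollama server on the default port, and the `benchmark` helper name is made up for illustration. It relies on the `eval_count` (generated tokens) and `eval_duration` (nanoseconds) fields of a non-streaming response.

```python
import json
import urllib.request


def tokens_per_second(response: dict) -> float:
    """Generation speed from an Ollama /api/generate response.

    eval_count is the number of generated tokens and eval_duration
    is the generation time in nanoseconds.
    """
    return response["eval_count"] / (response["eval_duration"] / 1e9)


def benchmark(model: str, prompt: str,
              host: str = "http://localhost:11434") -> float:
    """Hypothetical helper: run one non-streaming generation, return tok/s."""
    payload = json.dumps(
        {"model": model, "prompt": prompt, "stream": False}
    ).encode("utf-8")
    request = urllib.request.Request(
        f"{host}/api/generate",
        data=payload,
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as resp:
        return tokens_per_second(json.load(resp))


# Example call (needs a running Ollama server and a pulled model):
#   print(benchmark("your-model-tag", "Explain GDDR6 in one paragraph."))
```

A single run is noisy. Averaging several runs with the same prompt and context size gives a fairer comparison between cards.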

RTX 3090 is 13–15% faster across the board, about 8 tok/s on 70B models. For a home lab running inference all day, that gap adds up: at a 13–15% deficit, the Arc Pro needs roughly 3 to 3.6 extra hours to produce what the RTX 3090 generates in 24 hours of continuous output.
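The wall-clock cost of a speed gap is easy to work out yourself. The sketch below assumes "X% faster" means the faster card produces (1 + X) times the tokens per second, and that generation is continuous with no idle time. Both are simplifying assumptions.

```python
def extra_hours(speed_advantage: float, baseline_hours: float = 24.0) -> float:
    """Extra time the slower card needs to match the faster card's output.

    If the fast card is (1 + a) times as fast, the slow card takes
    (1 + a) times as long, so the extra time is a * baseline_hours.
    """
    return baseline_hours * speed_advantage


def output_shortfall(speed_advantage: float) -> float:
    """Fraction of tokens the slower card misses in the same time window."""
    return speed_advantage / (1.0 + speed_advantage)


for advantage in (0.13, 0.15):
    print(f"{advantage:.0%} faster: "
          f"{extra_hours(advantage):.2f} extra hours per 24h, "
          f"{output_shortfall(advantage):.1%} fewer tokens in the same window")
```

For interactive use the gap matters far less than these totals suggest, since most of the day is idle time rather than generation.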

For comparison, the RTX 5060 Ti (current-gen, sold in 8GB and 16GB variants) is slower than both and can't run 70B models at all in Q4.

Intel's oneAPI Ecosystem for Local LLM Inference

NVIDIA's CUDA ecosystem is locked in. Intel's oneAPI is newer and built on open standards such as SYCL, so it aims at broader hardware compatibility but has less depth in niche tools.

Apple Silicon

❌ No

✅ Full

❌ No

The Arc Pro B70 is great for inference; Ollama users will forget which GPU they have. But if you're planning to fine-tune custom adapters (LoRA, QLoRA), Unsloth doesn't support Arc Pro yet, and no timeline exists. NVIDIA's ecosystem is deeper here.

Note

Unsloth is the standard for efficient fine-tuning on consumer GPUs. It supports only NVIDIA (CUDA) and Apple Silicon (Metal) right now. Arc Pro fine-tuning support is not on Intel's roadmap for 2026.

When Arc Pro's Fresh Hardware Pays Off vs When NVIDIA Dominates

Professional/Business Use Case: Arc Pro Wins

You need 24/7 reliability, your build is capital equipment, and you want vendor support.

- 24/7 uptime: New hardware with warranty beats mining-worn secondhand by a wide margin

- Enterprise support: Intel has proper business account support; RTX 3090 cards have zero support path

- Thermal consistency: New fans, new paste, no thermal cycling stress

- Production deployment: Your customer doesn't care how the inference happens — they care that it's reliable. Arc Pro gives you that assurance

- Cost of failure: One hardware failure in a production system costs more than the warranty difference

Arc Pro B70 is a no-brainer for an AI consulting firm running customer inference workloads.

Home Lab / Budget Use Case: RTX 3090 Wins

You're optimizing for speed-per-dollar, you accept higher risk, and you have the skills to troubleshoot.

- 13% speed advantage: Every day counts when you're doing research or iterating models

- About $300 cost difference: A used RTX 3090 at $650 undercuts the Arc Pro B70 at $949 by $299. That gap funds better cooling, a larger power supply, or part of a second GPU

- Ecosystem depth: If you ever want to fine-tune (even once), CUDA is established and stable; Arc Pro support is uncertain

- Resale: If this doesn't work out, RTX 3090 cards hold value better in the secondhand market

- Proven scale: RTX 3090 infrastructure is battle-tested across thousands of home labs

For an individual builder or startup, the RTX 3090 is the economic play.
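The price-to-speed argument can be put into numbers with a small sketch. It uses the prices quoted above ($650 used, $949 new) and the 13% speed advantage, and normalizes Arc Pro throughput to 1.0, which is an assumption made purely so the two cards can be compared on one scale.

```python
def price_per_speed(price_usd: float, relative_speed: float) -> float:
    """Dollars paid per unit of relative throughput."""
    return price_usd / relative_speed


def price_per_vram_gb(price_usd: float, vram_gb: float) -> float:
    """Dollars paid per GB of VRAM."""
    return price_usd / vram_gb


cards = {
    # name: (price in USD, relative speed with Arc Pro = 1.0, VRAM in GB)
    "RTX 3090 (used)": (650, 1.13, 24),
    "Arc Pro B70 (new)": (949, 1.00, 32),
}

for name, (price, speed, vram) in cards.items():
    print(f"{name}: ${price_per_speed(price, speed):.0f} per unit speed, "
          f"${price_per_vram_gb(price, vram):.2f} per GB of VRAM")
```

On these inputs the used RTX 3090 comes out ahead on both measures, though the per-GB gap is narrow. Neither figure prices in warranty or failure risk, which is the whole case for the Arc Pro.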

Verdict

Arc Pro B70 if: You're building for professional/production use, need fresh hardware warranty, and are okay with 13% slower token/s. 32GB means you'll never outgrow a 70B model at Q3.

RTX 3090 if: You're optimizing price-to-speed, happy to buy secondhand, and might fine-tune custom models. The 13% speed gain compounds over time, and NVIDIA's ecosystem breadth (especially Unsloth) is unmatched.

For more context on hardware selection, check out our ultimate guide to local LLM hardware in 2026. And if you're planning to use llama.cpp specifically, our advanced llama.cpp guide covers Arc Pro optimization tricks.

Arc Pro is the future of Intel's AI strategy. It's ready for production work today. But RTX 3090 is still the better choice if you're buying used in April 2026 — and that's the honest truth.