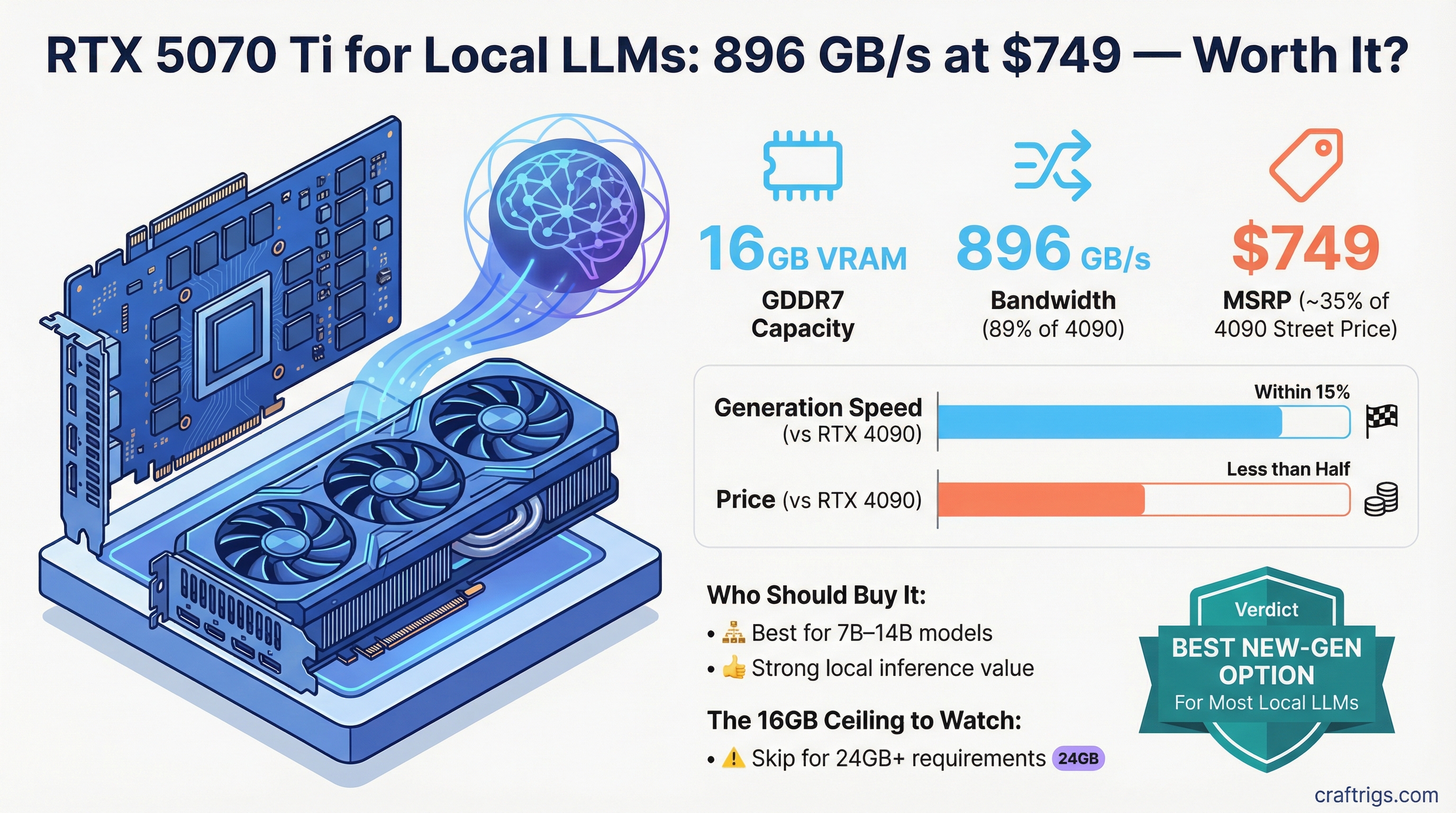

TL;DR: The RTX 5070 Ti is a strong local LLM card at $749 MSRP. Its 16GB of GDDR7 and 896 GB/s of bandwidth put it within 10–20% of the RTX 4090 on 8B generation speed at less than half the price. It's the best new-generation option for most people running 7B–14B models. Skip it only if you need 24GB+ for larger models.

The RTX 5070 Ti arrived in early 2025 as part of Nvidia's Blackwell 50-series lineup, and it hit at a price point most people can actually afford. The question isn't whether it's fast; it is. The question is whether 16GB GDDR7 and $749 MSRP make more sense than the alternatives.

For local LLM work, the answer is yes for most people. Here's why.

The Specs That Actually Matter

For local AI inference, you care about two numbers above everything else: VRAM capacity and memory bandwidth. Everything else — CUDA cores, TFLOPS, ray tracing — is largely irrelevant to how fast the model generates tokens.

The RTX 5070 Ti's relevant profile:

- VRAM: 16GB GDDR7

- Memory bandwidth: 896 GB/s

- Memory bus: 256-bit

- Architecture: Blackwell GB203-300

- TDP: 300W (peaks around 350W under load)

- MSRP: $749

The bandwidth figure is what stands out. The RTX 4070 Ti Super — the previous mid-high tier card — had 672 GB/s. The 5070 Ti is 33% higher. That translates almost directly into faster token generation, which is the bottleneck for day-to-day AI use.

For context: the RTX 4090 has 1,008 GB/s. The 5070 Ti is at 89% of the 4090's bandwidth, at roughly 30-35% of the 4090's current street price.
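Why bandwidth maps so directly to generation speed can be sketched with a common rule of thumb: producing each token means reading roughly all of the model's weights from VRAM once, so bandwidth divided by model size gives a ceiling on tokens per second. The snippet below is a rough illustration under that assumption, not a benchmark. The bandwidth figures come from this article; the ~4.9 GB size for an 8B model at Q4_K_M is an assumed, approximate value, and the function name is made up for the example.

```python
# Bandwidth-bound ceiling on generation speed (a rule of thumb, not a benchmark).
# Assumption: each generated token reads all model weights from VRAM once.

def max_tokens_per_second(bandwidth_gb_s: float, model_size_gb: float) -> float:
    """Upper bound on tokens/s; ignores KV cache reads and compute overhead."""
    if model_size_gb <= 0:
        raise ValueError("model_size_gb must be positive")
    return bandwidth_gb_s / model_size_gb


# Bandwidth in GB/s, as quoted in the article.
CARDS = {
    "RTX 4070 Ti Super": 672,
    "RTX 5070 Ti": 896,
    "RTX 4090": 1008,
}

# Assumed approximate weight size of an 8B model at Q4_K_M.
MODEL_SIZE_GB = 4.9

for name, bandwidth in CARDS.items():
    ceiling = max_tokens_per_second(bandwidth, MODEL_SIZE_GB)
    print(f"{name}: ceiling of about {ceiling:.0f} tokens/s")
```

Measured speeds land well below these ceilings because of KV cache traffic and kernel overhead, but the ratios between cards tend to track the bandwidth ratios.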

How Fast Is It?

Real-world inference benchmarks on the 5070 Ti with llama.cpp and Ollama:

- Llama 3.1 8B at Q4_K_M: approximately 100–125 tokens per second generation

- Qwen 2.5 14B at Q4_K_M: approximately 55–70 tokens per second generation

- Llama 3.3 70B at Q2 (partial CPU offload): not practical — VRAM isn't enough for single-card use

For comparison, the RTX 4090 pulls around 120–150 t/s on 8B and 80–100 t/s on 14B generation. The 5070 Ti is within 10–20% on generation speed for 8B, which in practice means a 1-2 second difference on a 1,000-token response, and most people won't notice. The 14B gap is wider, at roughly 30% by these figures.
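To put the 8B speed gap in seconds, here is a minimal sketch that converts tokens per second into wall-clock generation time, using the low and high ends of the ranges quoted above. The 1,000-token response length is an assumption chosen for illustration, and prefill time is ignored.

```python
# Convert generation speed into wall-clock time per response.
# Assumption: a 1,000-token response; prefill time is not included.

def generation_seconds(output_tokens: int, tokens_per_second: float) -> float:
    """Time to generate `output_tokens` at a steady generation speed."""
    return output_tokens / tokens_per_second


def time_gap(output_tokens: int, slower_tps: float, faster_tps: float) -> float:
    """Extra seconds the slower card needs for the same response."""
    return (generation_seconds(output_tokens, slower_tps)
            - generation_seconds(output_tokens, faster_tps))


OUTPUT_TOKENS = 1000  # assumed response length

# (5070 Ti t/s, 4090 t/s) at the low and high ends of the 8B ranges above.
for label, slower, faster in (("low end", 100, 120), ("high end", 125, 150)):
    gap = time_gap(OUTPUT_TOKENS, slower, faster)
    print(f"{label}: 5070 Ti takes {gap:.2f}s longer")
```

Shorter replies shrink the gap proportionally, so a 500-token answer differs by less than a second under the same assumptions.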

The 5070 Ti also has strong prefill speed, meaning how quickly it processes the input context before generating output. This matters when you're working with long documents, large codebases, or multi-turn conversations. Blackwell's architecture improvements show up most clearly here.
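If you want to check prefill and generation speed on your own card, one option is to read the timing fields Ollama returns from its `/api/generate` endpoint: `prompt_eval_count` and `prompt_eval_duration` cover prefill, `eval_count` and `eval_duration` cover generation, with durations in nanoseconds. The sketch below assumes a local Ollama server on its default port; the helper names are made up for this example.

```python
import json
import urllib.request


def tokens_per_second(count: int, duration_ns: int) -> float:
    """Tokens per second from a token count and a duration in nanoseconds."""
    if duration_ns <= 0:
        return 0.0
    return count / (duration_ns / 1e9)


def summarize(response: dict) -> dict:
    """Extract prefill and generation speed from an Ollama generate response."""
    return {
        # Prefill fields can be missing when the prompt is served from cache.
        "prefill_tps": tokens_per_second(
            response.get("prompt_eval_count", 0),
            response.get("prompt_eval_duration", 0),
        ),
        "generation_tps": tokens_per_second(
            response.get("eval_count", 0),
            response.get("eval_duration", 0),
        ),
    }


def run_once(model: str, prompt: str, host: str = "http://localhost:11434") -> dict:
    """Send one non-streaming request to a local Ollama server and summarize it."""
    payload = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode()
    request = urllib.request.Request(
        f"{host}/api/generate",
        data=payload,
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as resp:
        return summarize(json.load(resp))
```

Calling `run_once("llama3.1:8b", "Summarize the history of GPUs.")` with a model you have already pulled returns both speeds for that request. Run it a few times and discard the first result, since the initial call includes model load time.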

What 16GB Actually Fits

VRAM determines which models you can run fully loaded in memory. With 16GB at Q4_K_M quantization:

- 7B models (Mistral 7B, Qwen 2.5 7B): run easily with room to spare, large context supported

- 8B models (Llama 3.1 8B): comfortable at 4–5GB used

- 14B models (Qwen 2.5 14B, Phi-4): fits at Q4, approximately 9–10GB used

- 24B–27B models (Mistral Small 3.1 24B, Gemma 3 27B): very tight at Q4, so the context window has to shrink; Q3 recommended

- 32B models: requires Q2–Q3, quality degrades noticeably

- 70B models: requires multi-GPU or heavy CPU offloading — not worth it on this card

The honest summary: 16GB is exactly right for the models most people actually use. The people running 30B+ models at high quality are a smaller subset, and they're buying the 4090 or 5090.
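The fit estimates above can be approximated with simple arithmetic: weight memory is parameter count times bits per weight divided by 8, and the KV cache adds memory that grows with context length. The sketch below is a rough estimate under stated assumptions, not a measurement. The 4.85 bits per weight for Q4_K_M is an assumed average, and the layer and head counts in the KV cache example are an assumed 8B-class model shape.

```python
# Rough VRAM estimate: quantized weights plus an fp16 KV cache.

def weights_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight memory in GB for a quantized model."""
    return params_billion * bits_per_weight / 8


def kv_cache_gb(n_layers: int, n_kv_heads: int, head_dim: int,
                context_tokens: int, bytes_per_value: int = 2) -> float:
    """Approximate KV cache size in GB (factor of 2 covers keys and values)."""
    total_bytes = (2 * n_layers * n_kv_heads * head_dim
                   * context_tokens * bytes_per_value)
    return total_bytes / 1e9


Q4_K_M_BITS = 4.85  # assumed average bits per weight for Q4_K_M
VRAM_GB = 16

for params in (8, 14, 27, 32, 70):
    size = weights_gb(params, Q4_K_M_BITS)
    headroom = VRAM_GB - size
    print(f"{params}B at Q4_K_M: ~{size:.1f} GB weights, {headroom:+.1f} GB headroom")

# Assumed 8B-class shape: 32 layers, 8 KV heads, head dimension 128.
print(f"KV cache at 8,192 tokens: ~{kv_cache_gb(32, 8, 128, 8192):.2f} GB")
```

By this estimate, a 27B model's weights alone sit at or slightly above 16GB at Q4_K_M, which is consistent with the Q3 recommendation, while 14B leaves several gigabytes for context.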

Who Should Buy It

The 5070 Ti makes sense if you:

- Are building a new local AI rig and don't already own a high-VRAM card

- Run 7B–14B models as your primary workload

- Want new-generation performance without paying 4090 or 5090 prices

- Care about efficiency: Blackwell draws less power per token than Ampere

The 5070 Ti is harder to justify if you:

- Already own an RTX 3090 or 4090 — the upgrade delta doesn't justify the cost

- Regularly run 30B+ models and need 24GB or more for quality quantizations

- Can find a used RTX 3090 at under $750 — 24GB VRAM for less money is a real trade-off worth considering

RTX 5070 Ti vs the Alternatives

vs RTX 3090 (used, ~$750–850): The 3090 has 24GB VRAM and 936 GB/s bandwidth — actually slightly higher than the 5070 Ti. For 24B+ models, the 3090's extra VRAM matters more than the architectural improvements. For 7B–14B work, they're roughly equivalent in speed. The 5070 Ti wins on efficiency and warranty; the 3090 wins on VRAM per dollar.

vs RTX 4090 (used, ~$2,100–2,400): The 4090 has 24GB and 1,008 GB/s. It's genuinely faster and fits larger models, but you're paying 3x the price for ~15% more generation speed and 8GB more VRAM. Unless you specifically need that extra VRAM, the 5070 Ti is the better value.

vs RTX 4060 Ti 16GB (~$400 used): The 4060 Ti has 16GB but only 288 GB/s of bandwidth, roughly a third of the 5070 Ti's, so token generation runs about three times slower. If you're on a budget, the 4060 Ti runs models fine at slower speeds. The 5070 Ti is a meaningfully better card for anyone who uses AI heavily.
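The value comparisons above reduce to two ratios: dollars per GB of VRAM and bandwidth per dollar. Here is a small sketch that computes both from the figures quoted in this section. The used prices are assumptions taken as the midpoints of the quoted ranges, so treat the output as illustrative and plug in current prices before deciding.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Card:
    name: str
    price_usd: float      # MSRP or assumed midpoint of the quoted used range
    vram_gb: int
    bandwidth_gb_s: int

    @property
    def usd_per_gb_vram(self) -> float:
        """Dollars paid per GB of VRAM (lower is better)."""
        return self.price_usd / self.vram_gb

    @property
    def bandwidth_per_usd(self) -> float:
        """GB/s of memory bandwidth per dollar (higher is better)."""
        return self.bandwidth_gb_s / self.price_usd


CARDS = [
    Card("RTX 5070 Ti (MSRP)", 749, 16, 896),
    Card("RTX 3090 (used)", 800, 24, 936),
    Card("RTX 4090 (used)", 2250, 24, 1008),
    Card("RTX 4060 Ti 16GB (used)", 400, 16, 288),
]

for card in CARDS:
    print(f"{card.name:<26} ${card.usd_per_gb_vram:6.2f}/GB VRAM   "
          f"{card.bandwidth_per_usd:.2f} GB/s per $")
```

With these assumed prices, the 3090 beats the 5070 Ti on VRAM per dollar while the two are close on bandwidth per dollar, and the 4090 trails both on each ratio.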

Street Price Reality

MSRP is $749, but street prices at launch ran $800–950 because of scalping and stock shortages. If you're paying over $900, the calculus starts shifting toward a used RTX 3090, which offers 24GB for similar or less money.

Check prices at:

- Newegg and B&H Photo for in-stock new cards

- eBay sold listings (not active listings) for real used market prices

- /r/hardwareswap for direct peer-to-peer deals

Wait for inventory to normalize before buying at inflated prices. The 5070 Ti will settle closer to MSRP once the initial launch demand clears.

The Verdict

The RTX 5070 Ti is the best new-generation GPU for local LLM work at the $750 price point. 16GB GDDR7 covers the models most people run, 896 GB/s bandwidth delivers near-4090 generation speed, and Blackwell's efficiency improvements mean lower power draw per token than older cards.

It's not the card for everyone — if you need 24GB for large models, look at the used 3090 or 4090 market. But for someone building a fresh local AI setup focused on 7B–14B models, the 5070 Ti is the right buy right now.