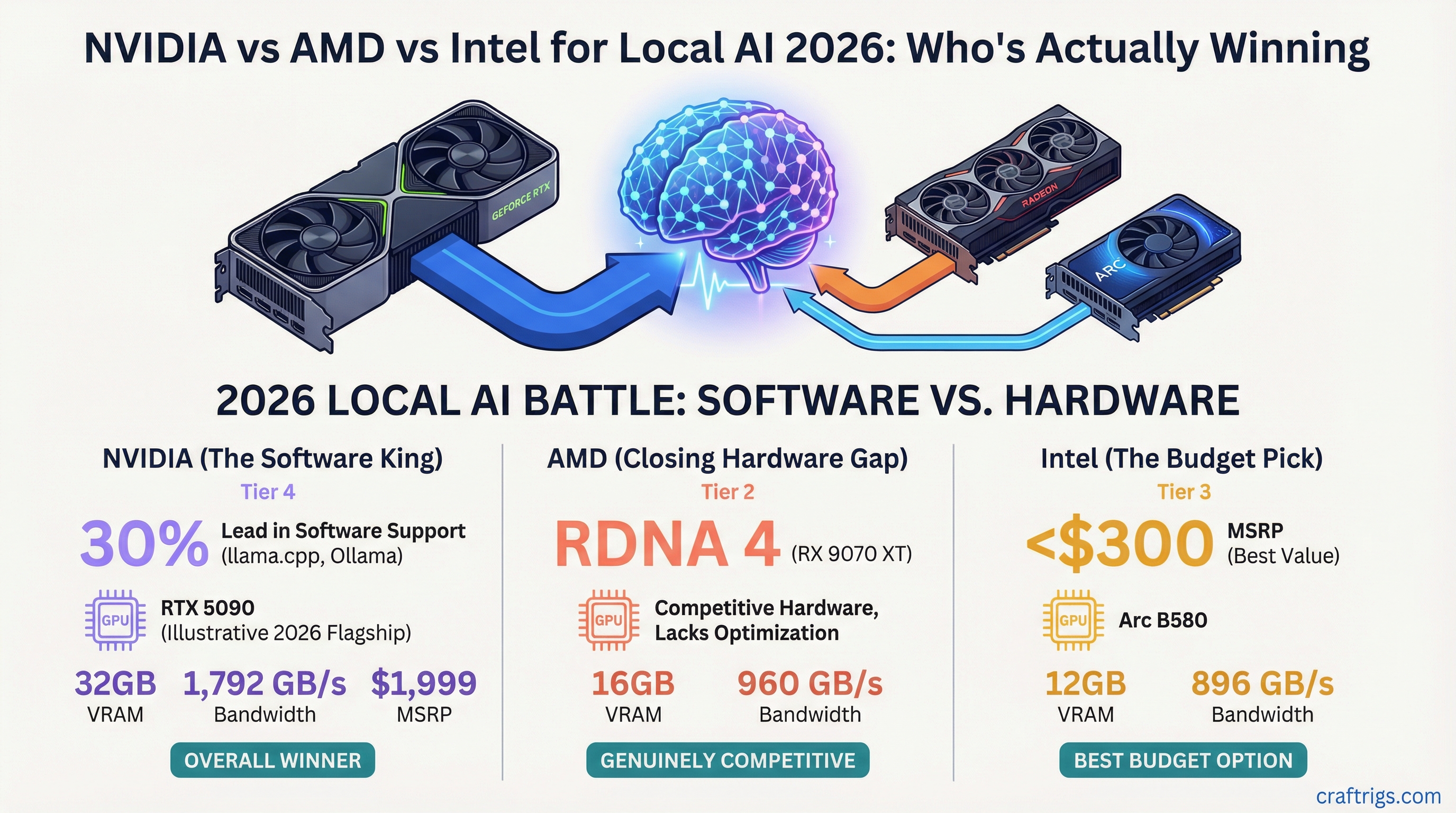

TL;DR: Nvidia still wins for local LLM inference in 2026, mainly because of superior software support in llama.cpp and Ollama. AMD RDNA 4 (RX 9070 XT) is genuinely competitive on hardware specs but falls behind on software optimization. Intel Arc B580 is the best budget option under $300. For most people: buy Nvidia, but don't dismiss AMD if you're comfortable troubleshooting.

Every year someone declares AMD or Intel is finally closing the gap with Nvidia on AI workloads. In 2026, that claim is more accurate than it's been in years — but "more accurate" doesn't mean "equal." Here's where things actually stand.

Why Software Beats Hardware for Local LLMs

Before comparing GPUs, you need to understand why Nvidia has held the lead for so long. It's not purely hardware — it's software infrastructure.

Local LLM inference runs through frameworks like llama.cpp, Ollama, LM Studio, and koboldcpp. These tools have years of CUDA optimization built in. Nvidia's GPU acceleration (CUBLAS, cuDNN, CUDA kernels) is deeply integrated, heavily tested, and continuously updated.

AMD uses ROCm and HIP for GPU compute. Intel uses oneAPI and SYCL. Both are functional, but they're behind in:

- Driver stability across operating systems

- Compatibility with every quantization format

- Community troubleshooting resources and documented fixes

- Flash attention and other inference optimizations

This matters because even if an AMD card has comparable raw specs, it may perform 20–30% below theoretical maximum on certain model formats due to less-optimized inference paths.

That gap is closing. But it hasn't closed.

Nvidia in 2026: The Blackwell Lineup

The RTX 50-series (Blackwell) is Nvidia's current generation:

- RTX 5090: 32GB GDDR7, 1,792 GB/s bandwidth — the fastest consumer card for AI inference, period. $1,999 MSRP, $3,800+ street price at launch.

- RTX 5080: 16GB GDDR7, 960 GB/s — strong all-around card, at MSRP ($999) it's competitive.

- RTX 5070 Ti: 16GB GDDR7, 896 GB/s — best value in the current lineup at $749 MSRP.

- RTX 5070: 12GB GDDR7, 672 GB/s — limited VRAM hurts its appeal for larger models.

For local AI, the standout is the 5070 Ti. The 5090 is the best single-card option if price isn't a constraint, but it's not worth paying $4,000+ at launch scalping prices.

The 40-series (Ampere) is now available used at reduced prices and remains excellent. The RTX 4090's 24GB GDDR6X and 1,008 GB/s bandwidth still handle any model you'd reasonably run locally.

Nvidia advantages:

- Best-in-class software support across all inference frameworks

- Widest compatibility with quantization formats (Q4, Q5, Q6, Q8, F16, F32)

- Most community resources for setup and troubleshooting

- NVLINK support for multi-GPU setups

Nvidia disadvantages:

- Premium pricing at every tier

- RTX 50-series launch stock issues

- Power consumption at the high end (5090 peaks near 600W)

AMD in 2026: RDNA 4 Is the Real Story

AMD's RDNA 4 lineup (RX 9000-series) launched in early 2026 and it's meaningfully better for AI than RDNA 3 was.

The RX 9070 XT is the card to look at:

- VRAM: 16GB GDDR6

- Bandwidth: approximately 640 GB/s

- Price: ~$599–649 MSRP

- Architecture: RDNA 4 with improved AI acceleration blocks

Hardware-wise, the RX 9070 XT is competitive with the RTX 4070 Ti Super. For gaming and general compute, it trades blows at roughly equal price points.

For local AI inference, it's more complicated. ROCm support has improved substantially with RDNA 4, and llama.cpp's HIP backend has gotten more optimization attention in the past year. Real-world token generation on the RX 9070 XT runs approximately 25–30% below an equivalent Nvidia card in the same bandwidth class — the software overhead is the gap.

The RX 9070 (non-XT) has 16GB at ~$499. Same story: good hardware, software overhead is the differentiator.

AMD advantages:

- More VRAM for the price in some configurations

- Strong gaming performance making it a better dual-purpose card

- RDNA 4 AI acceleration is a real improvement over RDNA 3

- ROCm 6.x works well on Linux for inference workloads

AMD disadvantages:

- Windows ROCm support still lags Linux

- Some quantization formats not fully optimized

- Less community troubleshooting documentation

- Certain llama.cpp features CUDA-only

Intel Arc in 2026: The Underdog Play

Intel's Arc B580 (Battlemage) surprised everyone at $249 with 12GB of GDDR6. It runs local LLMs. That fact alone puts it in a different category than Intel's previous AI-adjacent cards.

The B580 specs for AI:

- VRAM: 12GB GDDR6

- Bandwidth: 456 GB/s

- Price: $249–299 new

- Architecture: Battlemage Xe2

Intel's oneAPI and IPEX-LLM have improved dramatically. For basic 7B model inference on Linux, the B580 works. On Windows, it requires more setup but is functional with current drivers.

Practical limits: 12GB VRAM caps you at 7B models comfortably, with some 13B models possible at lower quantizations. Generation speed is slower than equivalent Nvidia bandwidth would suggest, but acceptable at the price point.

The B580 isn't a card for someone who wants to run AI all day professionally. It's a card for someone with a $250 budget who wants to experiment with local models without buying a used entry-level Nvidia card.

Intel advantages:

- Best VRAM-per-dollar under $300, nothing else comes close

- Active improvement trajectory — drivers get better consistently

- Good Linux support through IPEX-LLM

Intel disadvantages:

- Still behind on Windows inference performance

- Limited to smaller model sizes

- Smaller community, fewer documented fixes

- Some inference features not yet supported

The Head-to-Head Summary

For pure local LLM performance per dollar: Nvidia wins at every price tier when you account for software efficiency. The RTX 5070 Ti at $749 outperforms the RX 9070 XT at $649 in real inference speed despite slightly lower raw bandwidth, due to better CUDA optimization.

For budget inference under $300: Intel Arc B580 is the only viable option. Nothing else gives you 12GB VRAM at that price.

For 24GB VRAM without paying 4090 prices: AMD doesn't have a current-gen answer. The used RTX 3090 at $800 is still the best path to 24GB VRAM.

For Linux-primary setups: AMD's gap with Nvidia narrows substantially. ROCm on Linux is solid. If you're already comfortable in a Linux AI environment and want to save money, AMD is a legitimate choice.

For Windows users running Ollama or LM Studio: Stick with Nvidia. The setup friction and occasional compatibility issues with AMD and Intel are real, and Nvidia just works.

The Verdict

Nvidia wins for local AI in 2026. Not by the dominant margin it had in 2022–2023, but it still wins on software support, framework compatibility, and community resources.

AMD RDNA 4 is the best alternative, especially on Linux. Intel Arc is the best budget play. If the Nvidia tax bothers you and you're comfortable with some setup complexity, AMD is now a legitimate option. A year ago it wasn't.

For anyone just getting started with local LLMs: buy Nvidia, follow the standard setup guides, and don't spend time troubleshooting driver issues. Optimize for time, not theoretical hardware value.