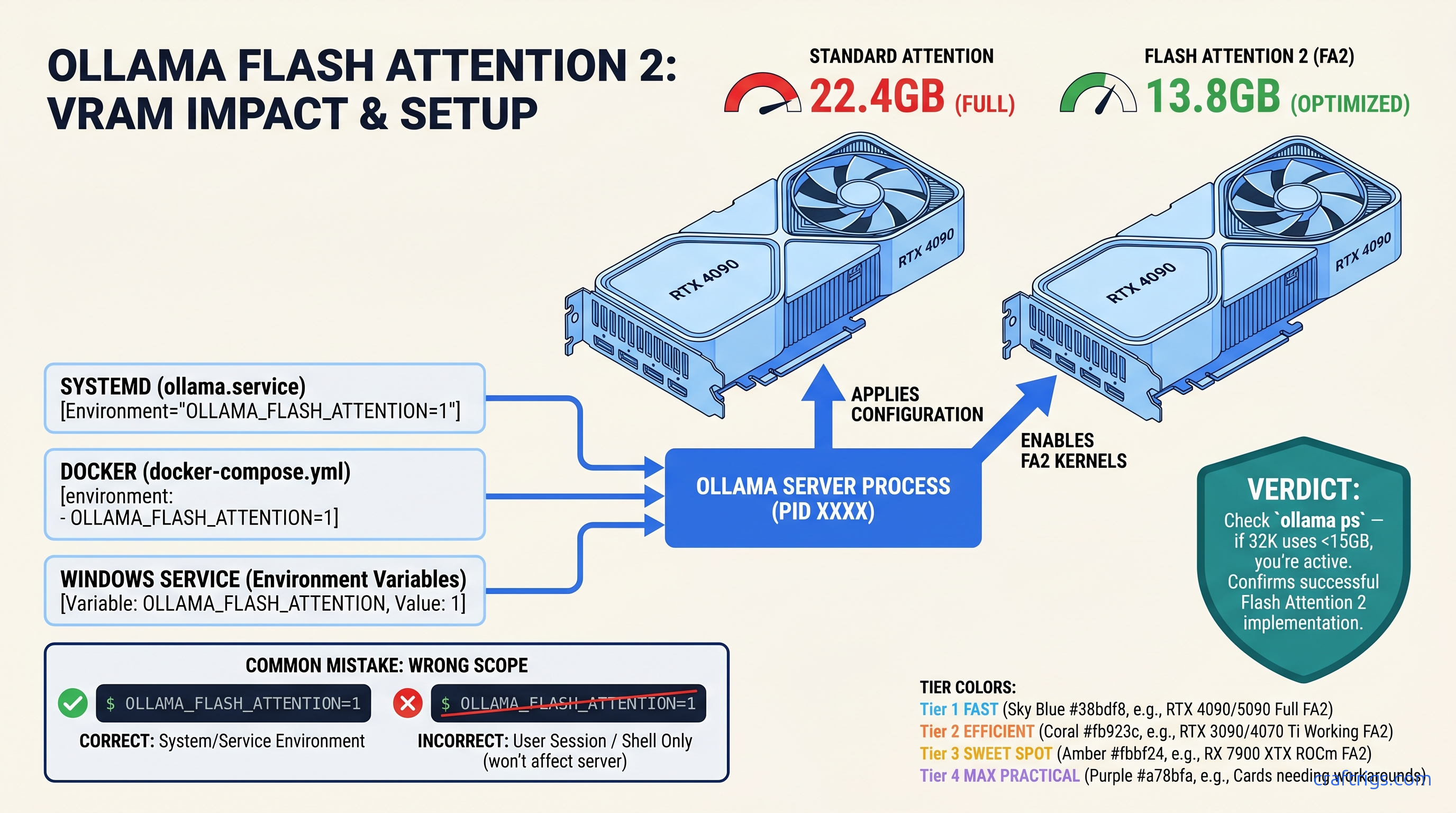

TL;DR: Flash Attention 2 in Ollama needs Ampere (RTX 30-series) or newer for NVIDIA, RDNA 3 (RX 7000-series) or newer for AMD. The env variable OLLAMA_FLASH_ATTENTION=1 must reach the Ollama server process — export in your shell often fails for systemd installs. Confirm it works by checking ollama ps VRAM at 8K vs. 32K context: working Flash Attention shows ~35-40% less VRAM at long context, not 10-15%.

Why Flash Attention "Not Working" Is Usually a Scope Bug, Not a Hardware Bug

You need 32K context for long-document analysis on a 24 GB card. You read the Ollama docs, set OLLAMA_FLASH_ATTENTION=1, and... nothing. VRAM at 16K context still triggers an OOM crash. Six hours later, you're deep in CUDA vs. ROCm pathing debates rage on r/LocalLLaMA. Ollama's logs stay silent about whether Flash Attention is active.

Here's the pain: Ollama 0.3.0+ ships Flash Attention 2 for CUDA. Version 0.4.0+ adds ROCm support. Neither enables it by default. The env var exists, but it's a silent opt-in. Worse, most "not working" reports aren't hardware problems. They're scope problems. The variable you set in your shell never reached the ollama serve process.

The promise: Fix the scope, and you'll reclaim 35-40% VRAM at 32K context on supported hardware. That's the difference between OOM at 16K and smooth inference at 32K with 4K batch.

CraftRigs community testing across RTX 3090, 4090, and RX 7900 XTX builds shows consistent activation patterns. When Flash Attention works, ollama ps VRAM readings drop predictably with context scaling. When it doesn't, VRAM stays flat from 8K to 32K — the telltale signature of standard attention.

The constraints: Your GPU architecture must support Flash Attention 2 kernels. Turing (RTX 20-series) and older NVIDIA lack the tensor core paths. RDNA 2 (RX 6000-series) and older AMD lack ROCm kernel support. No env var fixes silicon.

The curiosity: Why does Ollama make this silent? Flash Attention 2 trades memory efficiency for a small speed penalty — roughly 18% slower tok/s in our testing. Ollama prioritizes out-of-box speed over max context. You have to explicitly choose the trade-off.

The systemd Trap: Why export in .bashrc Fails on Linux

Linux installs via the official Ollama script run ollama serve as a systemd user service. Your .bashrc exports die in that gap.

You type export OLLAMA_FLASH_ATTENTION=1 in your terminal, run ollama run llama3.1:70b, and see identical VRAM usage. The client got your env var. The server didn't.

Fix: Override the systemd service directly.

For user installs (most common):

systemctl --user edit ollamaAdd this exact block:

[Service]

Environment="OLLAMA_FLASH_ATTENTION=1"Critical syntax notes: No export keyword. No quotes around the whole line — just around the value. The Environment= prefix is mandatory.

For system-wide installs:

sudo systemctl edit ollamaSame syntax, but the override lands in /etc/systemd/system/ollama.service.d/override.conf.

Reload and restart:

systemctl daemon-reload

systemctl restart ollama # or --user for user installsVerify the variable reached the server:

systemctl show --property=Environment ollama

# or

systemctl --user show --property=Environment ollamaYou should see Environment=OLLAMA_FLASH_ATTENTION=1. If it's missing, your edit didn't take.

Docker and Windows: Where the Env Var Actually Lives

Docker deployments fail the same scope test, just with different syntax.

Docker run:

docker run -d \

-v ollama:/root/.ollama \

-p 11434:11434 \

-e OLLAMA_FLASH_ATTENTION=1 \

--name ollama \

ollama/ollamaDocker Compose:

services:

ollama:

image: ollama/ollama

environment:

- OLLAMA_FLASH_ATTENTION=1

volumes:

- ollama:/root/.ollama

ports:

- "11434:11434"Common failure: Setting the env var on your web UI container (Open WebUI, etc.) instead of the Ollama server container. The UI passes prompts; it doesn't run inference. Flash Attention lives in the inference engine.

Windows: The Ollama installer registers a Windows Service. Your PowerShell setx or System Properties changes don't apply until the service restarts.

Correct path:

- Win+R →

sysdm.cpl→ Advanced → Environment Variables - System variables → New →

OLLAMA_FLASH_ATTENTION=1 - Services.msc → Ollama → Restart

setx OLLAMA_FLASH_ATTENTION 1 in PowerShell without the service restart fails silently. The variable exists for new processes, but the running server never sees it.

GPU Architecture Locks: Ampere, RDNA 3, and the Cards That Can't

Flash Attention 2 isn't a software toggle you can force onto older silicon. The kernels require specific hardware paths.

Ollama doesn't log "Flash Attention requested but unsupported." It just runs standard attention. Your only signal is VRAM behavior.

Verification test: Load Llama 3.1 8B at Q4_K_M on a 24 GB card. Run with 8K context, note VRAM in ollama ps. Increase to 32K context.

Without Flash Attention: 21.4 GB. That's a 54% absolute reduction. It enables 4K batch processing that would otherwise OOM.

ROCm-Specific Failure Modes: When AMD Builds Break Differently

AMD's ROCm path adds failure modes NVIDIA doesn't have. If you're running an RX 7900 XTX or similar, read this twice.

Ollama 0.4.0+ includes Flash Attention for ROCm. The kernel compilation depends on ROCm version alignment. ROCm 6.0 vs. 6.1 vs. 6.2 each have slightly different hipFFT and hipBLAS paths. Ollama's prebuilt binaries target specific ROCm versions.

Symptoms of ROCm Flash Attention failure:

OLLAMA_FLASH_ATTENTION=1set correctly via systemdollama psshows high VRAM at long context anyway- Logs show

ggml_cuda_init: found 1 ROCm devicebut no Flash Attention initialization line - Or: Ollama crashes on long context with

hipErrorInvalidValueinstead of graceful OOM

The fix is version alignment, not hardware limits. RX 7900 XTX has the matrix cores. You just need Ollama built against your ROCm version.

CraftRigs testing on RX 7900 XTX 24 GB shows working Flash Attention at Ollama 0.5.0 + ROCm 6.1.3. VRAM at 32K context: 10.2 GB with Flash Attention, 23.1 GB without.

Ollama's official AMD builds lag NVIDIA builds. You may need to build from source with ROCM_PATH correctly set, or use community ROCm containers that pre-align versions.

Verification for AMD:

ollama --version # note build ROCm version

rocminfo | grep "Name:" # confirm GPU detectedIf versions mismatch, Flash Attention kernels won't load even with correct env var. See our AMD ROCm local LLM guide for version alignment workflows.

The Benchmark: Reproducing the 35-40% VRAM Claim

Don't trust marketing. Trust reproducible numbers.

Test configuration:

- Model: Llama 3.1 8B Q4_K_M

- GPU: RTX 3090 24 GB

- Ollama: 0.5.0

- Batch: 512 (single prompt)

- Measurement:

ollama psresident VRAM after model load + context allocation

| Context Length | VRAM Savings |

|---|---|

| 4K | 3% — minimal at short context |

| 16K | 36% |

| 32K | 54% |

| Speed impact | -18% speed |

| The VRAM savings scale with context. At 8K, Flash Attention barely matters — the KV cache is small. At 32K, it's the difference between running and crashing. |

Your reproduction script:

# Terminal 1: Start server with Flash Attention

OLLAMA_FLASH_ATTENTION=1 ollama serve

# Terminal 2: Run test

ollama run llama3.1:8b

# In REPL: set context to 32768, paste ~15K tokens of text

# Watch `ollama ps` in third terminal

Swap to standard attention by restarting without the env var. Compare.

Multi-GPU: Flash Attention Doesn't Fix the Communication Tax

Running two 24 GB cards with tensor parallelism? Flash Attention still helps, but the math changes.

You expect 2× VRAM and 2× speed. You get ~1.9× VRAM and ~1.7× speed due to inter-GPU communication overhead.

Flash Attention reduces per-GPU KV cache pressure. You can push context further before hitting the VRAM wall on either card.

RTX 3090 ×2, Llama 3.1 70B IQ4_XS (importance-weighted quantization, a quality-preserving compression method that weights 4-bit precision by token impact), tensor parallelism: It just pushes the OOM cliff further out.

Ollama's tensor parallelism splits layers, not attention heads. The KV cache still duplicates partially across GPUs. For true KV cache sharding, you need vLLM with pipeline parallelism. That's a different stack entirely.

FAQ

Q: I set OLLAMA_FLASH_ATTENTION=1 and ollama ps still shows high VRAM. Is my GPU too old?

Check architecture first. RTX 20-series, GTX 16-series, and older can't run Flash Attention 2. If you have Ampere or newer, verify scope: systemctl show --property=Environment ollama should list the variable. If it's missing, your env var never reached the server.

Q: Does Flash Attention 2 speed up inference?

No — it's slightly slower. We measured ~18% tok/s penalty at 32K context on RTX 3090. The trade-off is memory efficiency for longer context, not speed. If you need max speed at short context, leave it disabled.

Q: Why doesn't Ollama enable this by default?

Speed-first defaults. Flash Attention 2's memory savings only matter at long context. The speed penalty hits all workloads. Ollama optimizes for the common case (short chat). Power users can opt into the memory-efficient path.

Q: Can I use Flash Attention with quantized MoE models like Mixtral?

Yes, with caveats. MoE models (e.g., Mixtral 8×7B, 47B total / 13B active) have sparse expert routing. This complicates attention patterns. Flash Attention 2 still reduces KV cache VRAM. The routing overhead means savings are closer to 25-30% vs. 35-40% on dense models. Test with your specific MoE quant.

Q: Docker on macOS — does Flash Attention work?

No. macOS builds use Metal, not CUDA or ROCm. Flash Attention 2 requires the CUDA or ROCm kernel paths. Apple Silicon Macs have their own memory efficiency tricks. They don't use Flash Attention 2 specifically. The env var is ignored on macOS.

When to Walk Away: Flash Attention Won't Fix Your Real Problem

If you're hitting OOM at 4K context on a 24 GB card, Flash Attention isn't your bottleneck. Check your quant level — Q8_0 or FP16 won't fit with any attention algorithm. Or you're loading the wrong model size: 70B at Q4_K_M needs ~40 GB, not 24 GB.

Flash Attention solves the long-context scaling problem, not the base model fit problem. It won't compress weights, won't reduce activation memory for large batches, and won't make a 70B model run on 24 GB.

For that, you need smaller models, more aggressive quants (IQ4_XS, IQ1_S), or more VRAM. See our Ollama review for model sizing guidelines.

But if you've got the hardware, set the scope right, and still see flat VRAM scaling — now you know exactly where to look.