Quick Summary

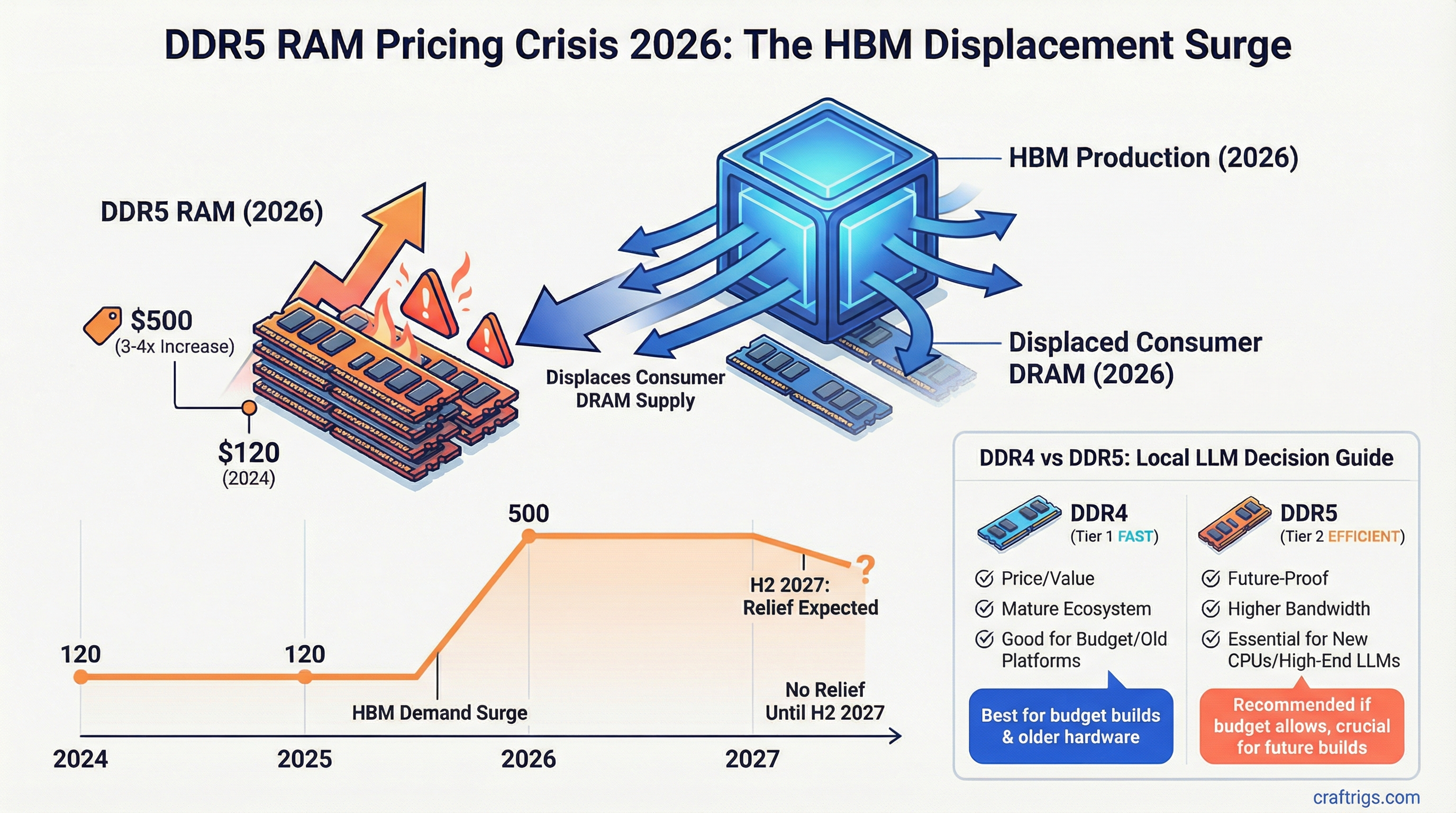

- The price: DDR5 32GB kits went from $80-120 (mid-2025) to $300-500 (March 2026) — a 3-4x increase driven by HBM production displacing consumer DRAM

- The timeline: Gartner projects no significant relief until H2 2027; builders planning now should treat current DDR5 pricing as the baseline

- The workaround: DDR4 platforms (AM4, LGA1200) are budget-sane today; DDR5 only if you're building long-term and can absorb the premium

If you priced out a DDR5 build six months ago and are reconsidering now, that budget spreadsheet is wrong by $200-400. DDR5 32GB kits that were sitting at $80-120 in mid-2025 are now $300-500 at major retailers. 64GB kits have crossed $600. This isn't a short-term supply blip — it's a structural shift in how DRAM fabs are allocating wafer capacity, and it won't resolve quickly.
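The budget gap quoted above can be checked with a few lines of arithmetic. This is a minimal sketch: the price ranges are the ones stated in this article, while `price_change` is a hypothetical helper, and pairing low end with low end and high end with high end is an assumption about how the two ranges line up.

```python
def price_change(old_range, new_range):
    """Compare two (low, high) USD price ranges end to end."""
    old_low, old_high = old_range
    new_low, new_high = new_range
    return {
        # Extra dollars a builder pays at each end of the range.
        "delta_usd": (new_low - old_low, new_high - old_high),
        # How many times more expensive each end of the range became.
        "multiplier": (new_low / old_low, new_high / old_high),
    }


# DDR5 32GB kits: mid-2025 range vs March 2026 range, as quoted in the text.
ddr5_32gb = price_change(old_range=(80, 120), new_range=(300, 500))

low_delta, high_delta = ddr5_32gb["delta_usd"]
low_mult, high_mult = ddr5_32gb["multiplier"]
print(f"Budget gap: ${low_delta}-{high_delta}")
print(f"Multiplier: {low_mult:.2f}x to {high_mult:.2f}x")
```

Swapping in the prices from your own saved parts list gives the correction to apply to an older budget spreadsheet.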

Here's what happened, what it means for builders, and what you should actually do.

The Root Cause: HBM Is Eating Consumer DRAM's Lunch

The AI accelerator boom created demand for High Bandwidth Memory that DRAM manufacturers were not prepared to supply at scale. HBM3 and HBM3e, the memory stacked on AMD MI300Xs (HBM3), NVIDIA H200s (HBM3e), and upcoming Blackwell variants, are built on the same DRAM fabrication equipment as consumer DDR5 but require significantly more complex packaging (stacking dies with through-silicon vias).

Samsung, SK Hynix, and Micron collectively control nearly all DRAM production globally. When NVIDIA started placing H100/H200 HBM orders at volumes that justified a capacity shift, these manufacturers made the rational economic decision: HBM commands 5-8x the price per gigabyte of consumer DRAM. Every wafer start moved to HBM is one that no longer produces consumer DRAM, so consumer supply contracts and prices rise, which is exactly what we're seeing.

The specific Samsung data point that spooked the market: Samsung raised contract DDR5 prices from approximately $7 per unit to $19.50 per unit across late 2025. That's not a typical quarterly fluctuation — it's a pricing reset driven by capacity scarcity. SK Hynix and Micron followed with similar adjustments.

Why This Hits DDR5 Harder Than DDR4

DDR4 production has largely moved to older, fully depreciated fabrication nodes. The equipment costs are paid off, yields are mature, and there's no competitive pressure to reallocate those lines toward HBM (which requires cutting-edge nodes). DDR4 pricing has stayed relatively flat — 32GB kits are still available in the $60-90 range, 64GB kits at $120-160.

DDR5 is the problem because it shares modern node capacity with HBM. The same 1z and 1a nm class DRAM nodes that produce DDR5 are the ones being redirected toward HBM stacks. As long as AI accelerator demand remains strong — and nothing in the current market suggests it's slowing — those capacity redirections stay in place.

What This Actually Costs You as a Builder

Run the numbers on a mid-range DDR5 LLM inference rig:

Platform A — AM5 DDR5 (March 2026 pricing)

- AMD Ryzen 7 9700X: ~$320

- B650 motherboard: ~$180

- 64GB DDR5-5600 (2x32GB): ~$580-620

- Total platform cost: ~$1,080-1,120

Platform B — AM4 DDR4 (March 2026 pricing)

- AMD Ryzen 7 5700X: ~$150

- B550 motherboard: ~$120

- 64GB DDR4-3600 (2x32GB): ~$130

- Total platform cost: ~$400

The AM4 platform is $680-720 less, depending on where the DDR5 kit lands in its range. That is enough to cover an RTX 4060 Ti 16GB, which delivers 16GB VRAM and massively outperforms CPU-only inference for anything under 34B parameters.
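The platform totals and the savings figure can be reproduced directly from the two parts lists. The sketch below uses only the prices quoted above; the dictionary layout and the helper names `platform_total` and `savings_range` are illustrative choices, not part of any tool.

```python
# Component prices in USD, stored as (low, high). Single-price parts repeat the value.
PLATFORM_A_AM5 = {
    "AMD Ryzen 7 9700X": (320, 320),
    "B650 motherboard": (180, 180),
    "64GB DDR5-5600 (2x32GB)": (580, 620),
}
PLATFORM_B_AM4 = {
    "AMD Ryzen 7 5700X": (150, 150),
    "B550 motherboard": (120, 120),
    "64GB DDR4-3600 (2x32GB)": (130, 130),
}


def platform_total(parts):
    """Sum the low and high ends of every part to get a (low, high) total."""
    low = sum(price[0] for price in parts.values())
    high = sum(price[1] for price in parts.values())
    return low, high


def savings_range(expensive, cheap):
    """Smallest and largest possible saving from choosing the cheaper platform."""
    exp_low, exp_high = platform_total(expensive)
    cheap_low, cheap_high = platform_total(cheap)
    return exp_low - cheap_high, exp_high - cheap_low


print("AM5 DDR5 total:", platform_total(PLATFORM_A_AM5))
print("AM4 DDR4 total:", platform_total(PLATFORM_B_AM4))
print("Savings range:", savings_range(PLATFORM_A_AM5, PLATFORM_B_AM4))
```

Keeping prices as ranges rather than single numbers makes it obvious how much of the gap comes from the memory line alone.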

For GPU-accelerated inference specifically, system RAM has minimal impact on model performance. If your models are running on GPU VRAM, 32GB DDR4 system RAM is entirely sufficient. The $450+ you'd spend on DDR5 is better directed at a higher VRAM GPU.

The Cases Where DDR5 Still Makes Sense

CPU-only inference for very large models is the legitimate DDR5 use case right now. If you're running 70B+ models without discrete GPU acceleration, either because you're using CPU offloading in llama.cpp or because you're on a platform like Xeon or Threadripper that doesn't have a clear GPU path, then DDR5's higher bandwidth provides meaningful throughput improvements. The headline figures are 51.2 GB/s per 64-bit channel for DDR5-6400 versus 25.6 GB/s for DDR4-3200. At the speeds used in the builds above, dual-channel DDR5-5600 peaks at 89.6 GB/s against 57.6 GB/s for dual-channel DDR4-3600.
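Theoretical peak memory bandwidth follows from the transfer rate, the bus width, and the channel count. The sketch below computes it and then turns it into a rough ceiling on decode speed. Two assumptions are baked in and are not from the article: token generation is treated as purely memory-bandwidth-bound (every weight read once per token), and `MODEL_SIZE_GB` is a hypothetical 40 GB footprint standing in for a 4-bit 70B model. Real throughput will be lower.

```python
def peak_bandwidth_gbs(mega_transfers_per_s, channels=2, bus_width_bits=64):
    """Theoretical peak bandwidth in GB/s.

    transfers per second x bytes per transfer x number of channels.
    """
    bytes_per_transfer = bus_width_bits / 8
    return mega_transfers_per_s * 1e6 * bytes_per_transfer * channels / 1e9


def decode_tokens_per_s_ceiling(bandwidth_gbs, model_size_gb):
    """Upper bound on tokens/s if all weights stream from RAM once per token."""
    return bandwidth_gbs / model_size_gb


# Hypothetical in-memory size of a 70B model at 4-bit quantization (assumption).
MODEL_SIZE_GB = 40.0

configs = {
    "DDR5-5600 dual channel": peak_bandwidth_gbs(5600),
    "DDR4-3600 dual channel": peak_bandwidth_gbs(3600),
}

for name, bandwidth in configs.items():
    ceiling = decode_tokens_per_s_ceiling(bandwidth, MODEL_SIZE_GB)
    print(f"{name}: {bandwidth:.1f} GB/s peak, at most {ceiling:.2f} tokens/s")
```

The ratio between the two ceilings is the same as the ratio between the bandwidths, which is why memory speed matters for CPU-only runs and barely registers once the model sits in VRAM.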

Current price tracking:

- DDR5-5600 32GB (2x16): ~$160-200

- DDR5-5600 32GB (1x32): ~$180-220

- DDR5-5600 64GB (2x32): ~$340-420

- DDR5-6000 64GB (2x32): ~$380-480

Long-term platform investment is the second case. AM5 is AMD's socket through at least 2027-2028 by their public roadmap. If you're building a rig you'll upgrade over 4-5 years, paying the current DDR5 premium makes sense because the platform longevity offsets the upfront cost. Just don't do it expecting a performance payoff today — the LLM inference gains over DDR4 are marginal unless you're CPU-only on very large models.

See our DDR5 vs DDR4 local AI guide for detailed bandwidth benchmarks, and how much RAM you actually need for model sizing guidance. For a complete budget build that avoids DDR5 costs entirely, see our $500 local LLM PC build guide.

If you're building an inference rig in spite of current DDR5 prices, the $1,200 local LLM build guide covers how to balance GPU VRAM against platform cost — including when DDR4 on AM4 is the better financial decision. For understanding how system RAM interacts with VRAM during inference (including when RAM becomes the bottleneck), see our CPU+GPU hybrid inference guide.

When Will Prices Recover?

Gartner's semiconductor team projects no meaningful consumer DRAM price relief until H2 2027. The logic: HBM capacity additions require 18-24 months from investment decision to production. Counting from the large capacity investments announced in late 2025, first production output lands in mid-to-late 2027, with market impact following in the second half of that year.

There are scenarios for faster recovery. If AI infrastructure spending contracts — either from a broader economic slowdown or from AI investment sentiment shifting — HBM demand could soften and free up capacity. Some analysts see early signs of this in Q1 2026 capex guidance from hyperscalers, but "some analysts" is not a planning assumption.

The conservative planning posture: DDR5 pricing at $350-500 for 64GB is the floor for 2026 builds. Budget accordingly or choose DDR4.

Practical Guidance for Builders in March 2026

Building now, GPU-accelerated inference: Use AM4/DDR4. The $680 in platform savings goes into VRAM, where it actually improves inference performance. A 5700X + B550 + 32GB DDR4 + RTX 4070 Ti Super 16GB outperforms any DDR5 CPU-only setup at the same or lower total budget.

Building now, CPU-only large models (70B+): DDR5 bandwidth matters here. Accept the current pricing or wait. If budget is hard-constrained, AM4 with 128GB DDR4 still works — it's slower but functional for overnight batch runs.

Waiting for price drops: Plan for late 2026 if you're optimistic, 2027 if you're realistic. The market data doesn't support a near-term recovery.

Already have AM5 hardware: Add the DDR5 and move on. The platform investment is made, and paying current prices for memory on an existing build is more defensible than starting over.

The DDR5 pricing crisis is real and structural. Build your budget around current pricing, not mid-2025 pricing, and make component decisions accordingly. For the full picture of how memory costs fit into a complete inference rig budget, see our $1,200 local LLM build guide.

DDR5 Pricing Factors

Diagram: NAND supply cuts, AI server demand, and the HBM allocation shift all feed the DDR5 price spike. The spike raises consumer kit prices (+40%) and server RAM prices (+60%); the first affects local LLM builders, and the second leads data centers to pause upgrades.