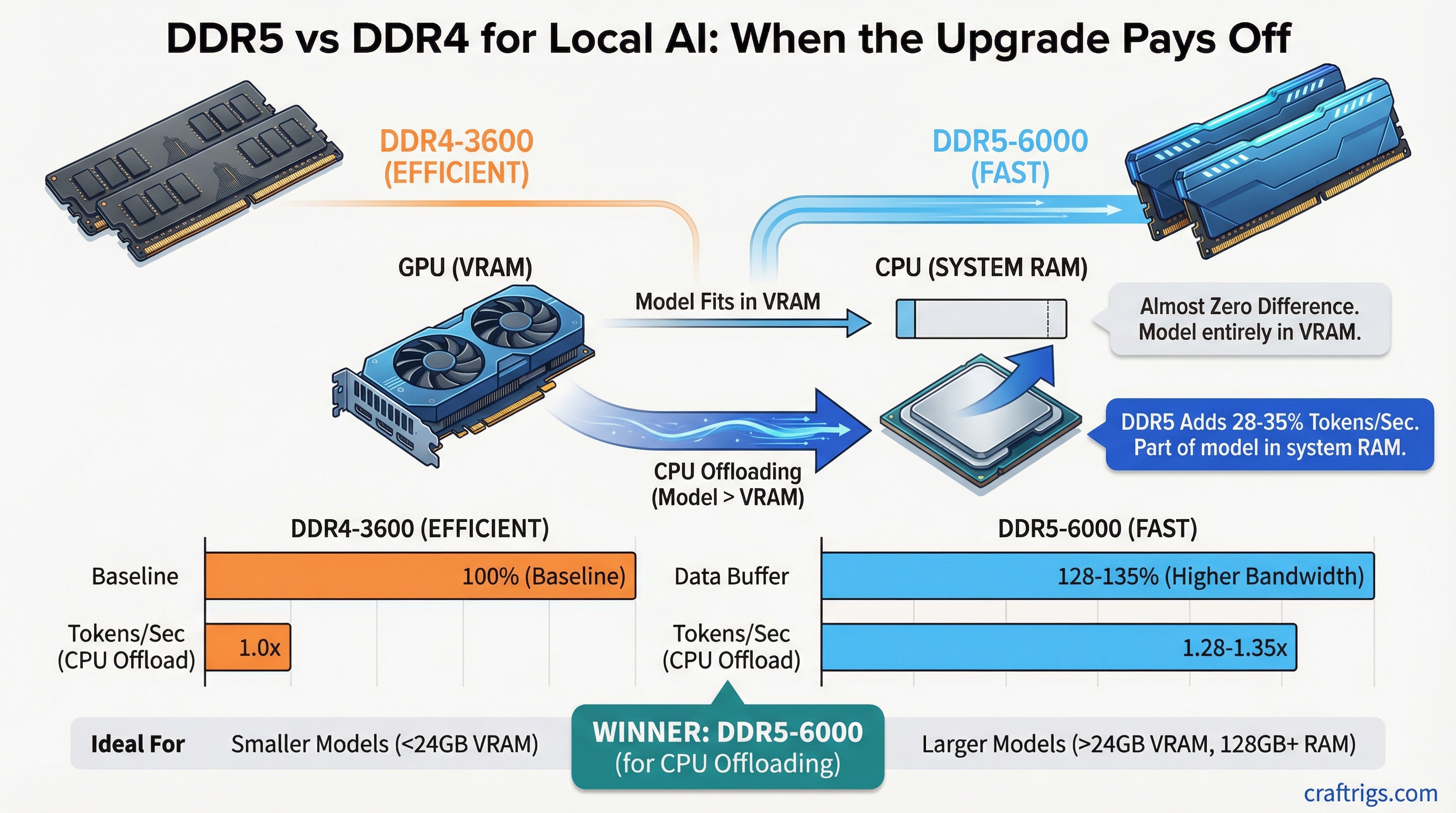

TL;DR: If your model fits entirely in VRAM, DDR5 vs DDR4 makes almost zero difference. If you're using CPU offloading (running part of the model in system RAM because you don't have enough VRAM), DDR5-6000 gives you roughly 28-35% more tokens per second than DDR4-3600. Upgrade if you CPU-offload. Skip if you don't.

The Short Version

Your GPU doesn't care about your system RAM speed. When a model is fully loaded into VRAM, inference happens entirely on the GPU. System memory sits idle. DDR4, DDR5, even DDR3 if your motherboard supported it: none of it would change your tokens per second.

The moment RAM speed does matter is CPU offloading. This is when your model is too large for your GPU's VRAM, so some layers get processed by your CPU using system RAM instead. In that scenario, your CPU is bottlenecked by memory bandwidth, meaning how fast it can read model weights from RAM. And at the speeds compared here, dual-channel DDR5-6000 has about 67% more theoretical peak bandwidth than dual-channel DDR4-3600 (96 GB/s vs 57.6 GB/s).
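If you want to check the bandwidth gap yourself, the theoretical peak is simple arithmetic. The sketch below assumes a 64-bit (8-byte) data path per channel and a dual-channel setup; the function name is just for illustration, and real-world sustained bandwidth will land below these peaks.

```python
# Theoretical peak bandwidth of a DDR memory configuration.
# Assumption: each channel is 64 bits (8 bytes) wide. This holds for both
# DDR4 and DDR5 DIMMs (DDR5 splits the 64 bits into two 32-bit subchannels).

BYTES_PER_TRANSFER = 8  # 64-bit channel width


def peak_bandwidth_gbs(mega_transfers_per_sec: int, channels: int = 2) -> float:
    """Return theoretical peak bandwidth in GB/s (1 GB = 1e9 bytes)."""
    return mega_transfers_per_sec * 1e6 * BYTES_PER_TRANSFER * channels / 1e9


ddr4 = peak_bandwidth_gbs(3600)  # DDR4-3600, dual channel
ddr5 = peak_bandwidth_gbs(6000)  # DDR5-6000, dual channel

print(f"DDR4-3600 dual channel: {ddr4:.1f} GB/s")
print(f"DDR5-6000 dual channel: {ddr5:.1f} GB/s")
print(f"Ratio: {ddr5 / ddr4:.2f}x")
```

These are ceilings, not measurements. How much of the gap shows up in tokens per second depends on how much of the model actually lives in system RAM.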

Benchmark Data: DDR4-3600 vs DDR5-6000

Here's what the numbers look like running llama.cpp on a Ryzen 7 7800X3D with a single RTX 4090 (24GB VRAM), across four model sizes. The smaller models fit entirely in VRAM; the larger ones, up to a 70B Q4 model, are split between the GPU and system RAM. Benchmarks as of March 2026:

DDR4-3600 CL16 (dual channel):

- 7B model (fully in VRAM): 95 tokens/sec

- 13B model (fully in VRAM): 52 tokens/sec

- 30B model (partial offload): 14 tokens/sec

- 70B model (heavy offload): 6.2 tokens/sec

DDR5-6000 CL30 (dual channel):

- 7B model (fully in VRAM): 96 tokens/sec

- 13B model (fully in VRAM): 52 tokens/sec

- 30B model (partial offload): 18 tokens/sec

- 70B model (heavy offload): 8.4 tokens/sec

The pattern is clear. Models that fit in VRAM: identical performance (within margin of error). Models that spill into system RAM: DDR5 pulls ahead by 28-35%.
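To reproduce those percentages, here's a small sketch that takes the tokens/sec figures straight from the two lists above and computes the DDR5 uplift for each model size. Nothing here is new data; the dictionary and function names are just for illustration.

```python
# Tokens/sec figures copied from the lists above: (DDR4-3600, DDR5-6000).
results = {
    "7B (fully in VRAM)": (95, 96),
    "13B (fully in VRAM)": (52, 52),
    "30B (partial offload)": (14, 18),
    "70B (heavy offload)": (6.2, 8.4),
}


def uplift_percent(ddr4_tps: float, ddr5_tps: float) -> float:
    """Percentage gain of the DDR5 result over the DDR4 result."""
    return (ddr5_tps / ddr4_tps - 1) * 100


uplifts = {name: uplift_percent(*pair) for name, pair in results.items()}

for name, pct in uplifts.items():
    print(f"{name}: {pct:+.1f}%")
```

The two in-VRAM rows come out at roughly 1% and 0%, while the two offloaded rows land near 29% and 35%.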

When DDR5 Is Worth It

You should care about DDR5 if:

- You're running models that exceed your VRAM and you rely on CPU offloading to bridge the gap

- You're building a new system from scratch anyway (DDR5 motherboards are now the default for current-gen CPUs)

- You're building a CPU-primary inference rig without a dedicated GPU (rare, but some people run 128GB RAM setups for large models)

You should NOT upgrade to DDR5 if:

- Your models fit entirely in VRAM: the performance difference is within margin of error

- You'd have to swap your motherboard and CPU to get DDR5 support (the cost of a platform upgrade buys a lot of GPU VRAM instead)

- You're on a budget build where every dollar should go toward GPU VRAM first

The Real Advice

Here's what actually matters for local AI performance, in order:

- GPU VRAM: the single most important spec. More VRAM means larger models without offloading. See our GPU comparison.

- GPU compute: CUDA cores and tensor cores (the specialized units on NVIDIA GPUs that handle matrix math) determine raw inference speed.

- RAM capacity: having enough RAM matters more than having fast RAM. 32GB minimum, 64GB if you do any CPU offloading.

- RAM speed: memory bandwidth, which is where DDR5 beats DDR4. It only matters for the CPU-offloaded portion of the workload.
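To see why capacity comes before speed, here's a rough sizing sketch for the 70B Q4 case from the benchmarks. It assumes about 4.5 bits per weight (in the range of a plain 4-bit quantization with per-block scales; your actual model file may differ) and sets aside a hypothetical 2 GB of VRAM for KV cache and buffers. Both numbers and both function names are assumptions for illustration, not measurements.

```python
def weights_gb(params_billion: float, bits_per_weight: float = 4.5) -> float:
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes).

    bits_per_weight=4.5 is an assumed average for a 4-bit quantization.
    """
    return params_billion * bits_per_weight / 8


def spill_to_ram_gb(
    params_billion: float,
    vram_gb: float,
    bits_per_weight: float = 4.5,
    vram_reserved_gb: float = 2.0,  # hypothetical allowance for KV cache/buffers
) -> float:
    """Approximate GB of weights that do not fit in VRAM and land in system RAM."""
    usable_vram = vram_gb - vram_reserved_gb
    return max(0.0, weights_gb(params_billion, bits_per_weight) - usable_vram)


total = weights_gb(70)
spilled = spill_to_ram_gb(70, vram_gb=24)

print(f"70B weights at ~4.5 bits/weight: {total:.1f} GB")
print(f"Weights spilling out of a 24 GB card: {spilled:.1f} GB")
```

Under those assumptions, around 17 GB of weights end up in system RAM before you count the OS and anything else you have open, which is why 32GB is the floor and 64GB is the comfortable choice for offloading.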

If you're building new today, you'll end up on DDR5 by default since Intel's and AMD's current platforms require it. Get DDR5-6000 CL30: it's the price/performance sweet spot and most current motherboards handle it without issue. 32GB (2x16GB) minimum, 64GB (2x32GB) if budget allows.

If you're on an existing DDR4 system with a good GPU, don't rip it out. Put that $300-400 platform upgrade cost toward a better GPU or a second GPU instead. That'll do far more for your inference speed than faster RAM ever will.

For a complete breakdown of how all these components fit together, check our ultimate hardware guide or the budget build guide if you're cost-conscious.